What is Data Science in Python?

Python has positioned itself as the ideal language for data science because of its simplicity of use, readability, and extensive library base.

Appendix

- Python Libraries for Data Science

- Why Python for Data Science?

- Steps involved in Data Science using Python

- Potential career opportunities in Data Science using Python

- Real-life examples of Data Science using Python

- Conclusion

Check out this insightful video on Data Science in Python Tutorial for Beginners.

{

“@context”: “https://schema.org”,

“@type”: “VideoObject”,

“name”: “Python for Data science Training”,

“description”: “What is Data Science in Python?”,

“thumbnailUrl”: “https://img.youtube.com/vi/bNv6hZvLz8s/hqdefault.jpg”,

“uploadDate”: “2023-04-25T08:00:00+08:00”,

“publisher”: {

“@type”: “Organization”,

“name”: “Intellipaat Software Solutions Pvt Ltd”,

“logo”: {

“@type”: “ImageObject”,

“url”: “https://intellipaat.com/blog/wp-content/themes/intellipaat-blog-new/images/logo.png”,

“width”: 124,

“height”: 43

}

},

“contentUrl”: “https://www.youtube.com/watch?v=bNv6hZvLz8s”,

“embedUrl”: “https://www.youtube.com/embed/bNv6hZvLz8s”

}

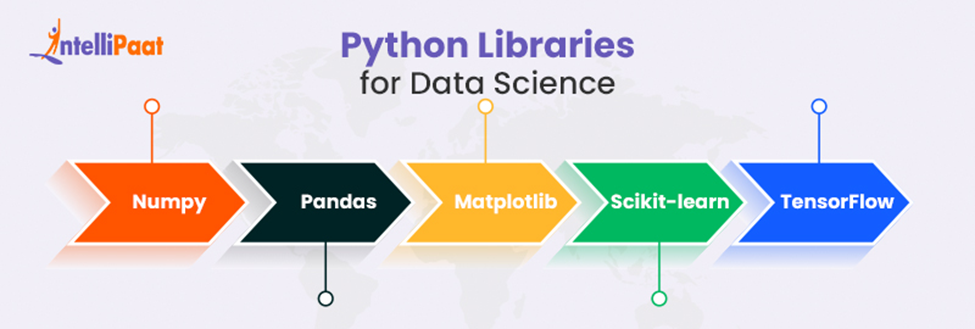

Python Libraries for Data Science

A Python library is a piece of reusable code you can add to your projects and programs. Here, each function carries out a particular action. Let’s have a look at the following library’s functions that allow us to obtain the pre-defined output we need:

- Numpy– The NumPy library makes Python data scientists significantly more effective when working with enormous data sets and volumes of data. Since its functions work like a giant calculator that can compute numerous numbers simultaneously, it also speeds up the process.

- Pandas– It provides easy-to-use data structures and data manipulation tools. This library is the backbone of Python data science work. We can work with Excel files, SQL, and many more.

- Matplotlib– Matplotlib is a library that helps to use data visualization for Python programming language. It helps to create high-quality plots, graphs, charts, and more. We use this library to develop visualizations for scientific research and data analysis.

- Scikit-learn– It is a Popular library for data science for Python. Its uses are for building predictive models and various machine-learning tasks.

- TensorFlow– The TensorFlow library allows users to define and evaluate mathematical expressions involving multi-dimensional arrays and optimize them. Using the TensorFlow library in data science, we can create various applications like image and speech recognition.

Wish to start learning Python for Data Science or upskill yourselves can enroll in a Python certification program Data Science using Python.

Why Python for Data Science?

In the above section, we have seen the various libraries of Python that are used in the Data Science domain. Now, let us know why there is a need for Python in Data Science with the help of the following points:

- It’s easy to learn – Python’s focus on simplicity and readability, Does not limit your functional possibilities.

- Scalability – Among all available languages, Python is the growing language. Python is especially beneficial when data analysis tasks have to be combined with online applications and cloud computing platforms or when they are a component of a more complex endeavor.

- Open Source Libraries – Python has hundreds of different open libraries and frameworks. Several of these resources will be focused on data analytics and machine learning, which you’ll discover as a data scientist. Big Data has a lot of support as well.

- Huge Community– As mentioned before, Python is the most growing language. Studying might be pretty challenging if you receive no assistance from anyone.

New to Data Science using Python? We’ve got you a Python tutorial for beginners, intermediate and advanced learners.

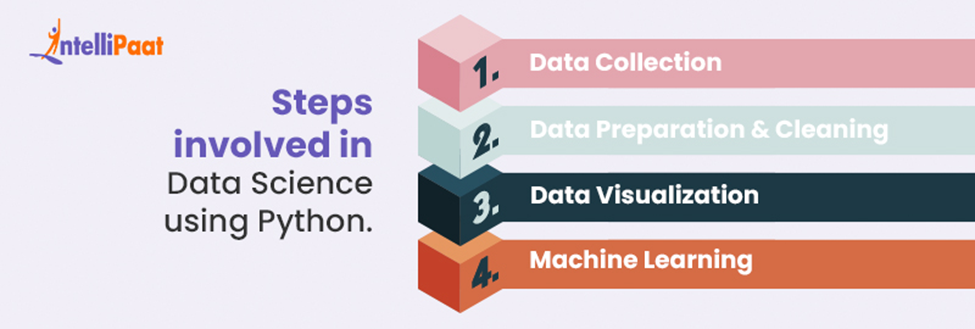

Steps involved in Data Science using Python.

Python has a vast selection of libraries and tools for data processing, visualization, and machine learning, making it a well-liked programming language for Data Science. We would like to see the basic steps that are involved in Data Science using Python:

- Data Collection– This is a process of acquiring data from various sources using Python. Data Collection is a crucial step in the data science workflow, as it determines the quality and quantity of data available for analysis.

- Data Preparation and Cleaning– It refers to transforming data into a usable format for analysis, such as scaling and normalizing data and removing missing values. We can create more efficient, less error-prone, and faster data-driven decision-making using Python for data preparation and cleaning.

- Data Visualization–This process involves Matplotlib and Seaborn to create dynamic visualizations like graphs and charts. Through this process, we can communicate and make data-driven decisions.

- Machine Learning– Machine Learning is a process of building and training models that can learn from data and make predictions. By using Machine learning techniques in data science, we can solve real-life problems.

Potential career opportunities in Data Science using Python

Python is a powerful programming language for data science that enables programmers to quickly detail and precise information applications and models. This makes it an essential tool for data scientists in Today’s highly competitive, data-intensive world.

Data scientists increasingly adopt Python on a large scale because it makes their work quick and simple. There are numerous potential career opportunities in data science Using Python.

- Data Analyst– Analyzing and interpreting large data sets to identify patterns and trends. They use this information to make data-driven decisions for their organization.

- Data Scientist–They work with more extensive data sets to develop predictive models and other analytical tools using data science.

- Business Intelligence Analyst– develops dashboard reports of present data in a way that is easy to understand for business stakeholders, which helps with business decisions.

- Machine Learning Engineer– Machine learning engineer focuses on the development of self-running artificial intelligence (AI) systems that can be used to automate prediction models.

Real-Life Examples Of Data Science Using Python

Data Science using Python has been widely applied in a variety of industries to better decision-making and obtain valuable insight from data. Let’s see some of the following examples of Python-based data science applications in practice:

- Customer Segmentation and Personalized Marketing

Python libraries like NumPy, Pandas, and sci-kit-learn can be used to perform analysis for Customer segmentation and Personalized Marketing.

For example, retail companies use browsing behavior and customer purchase history to cluster customers into different segments using unsupervised machine learning for each component, improving engagement and sales. This could involve sending content to customers based on their browsing behavior or promotions of products.

Career Transition

- Maintenance in Manufacturing

Python libraries like NumPy, Pandas, and sci-kit-learn can be used to perform analysis for Predictive Maintenance in Manufacturing.

For example, Using Data Science in Python, Manufacturing companies can collect sensor data from their machines, such as temperature, vibration reading, and pressure. So before any device fails, companies can identify the problems and save time and money.

- Weather prediction in the Agriculture Center

Weather plays an important role in the agriculture field but because of uncertain weather conditions agriculture sector has to face the loss. Nowadays, data science is changing the way farmers and agriculture professionals make decisions.

Using Data Science in the agriculture sector, farmers can get weather predictions like humidity and type of sky coverage and can take precautionary measures.

- Predictive Analytics in HealthCare

Predictive analytics in the healthcare sector utilizes data, statistical algorithms, and machine learning approaches to predict potential outcomes based on existing data. It advises medical professionals on how to avoid, identify, and manage possible health issues and improve patient products and medical treatments.

If are you looking forward to cracking the Interview in the Data Science field, then do check out Intellipaat’s Data Science Interview Questions and Answers to ace the interview.

Conclusion

Data science is now more critical than ever because, in Today’s generation, information application is essential for our economy. The need for data scientists is expanding along with the value of data. The market is shifting uniquely Today as more people talk about AI and machine learning. Data science, which solves problems by connecting relevant data for later use, aids these emerging technologies.

Courses you may like

If you have any queries related to this domain, then you can reach out to us at Intellipaat’s Data Science Community!

The post What is Data Science in Python? appeared first on Intellipaat Blog.

Blog: Intellipaat - Blog

Leave a Comment

You must be logged in to post a comment.