Training on Low-Code Business Automation with BPMN and DMN

Blog: Method & Style (Bruce Silver)

In recent posts, I have explained why anyone who can create rich spreadsheet models using Excel formulas can learn to turn those into Low-Code Business Automation services on the Trisotech platform, using BPMN and DMN. I have also explained why FEEL and boxed expressions are actually more business-friendly than Power Fx, Microsoft's low-code language based on Excel’s formula language. It just takes a bit of training on the platform.

To that end, I have just launched the beta version of a new course, Low-Code Business Automation with BPMN and DMN. The course is an outgrowth of recent client engagements, which seemed to follow a consistent pattern: A subject matter expert has a great new product idea, which he or she can demonstrate in Excel. A business event, represented by a new row in Excel Table 1, generates some outcome represented by new or updated rows in Tables 2, 3, and so on. The unique IP is encapsulated in the business logic of that outcome. The challenge is to take those Excel examples, generalize the logic using BPMN and DMN, and replace Excel with a real database.

Business Automation Service: The Basic Pattern

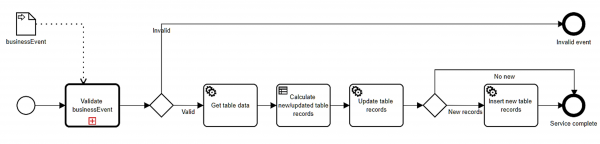

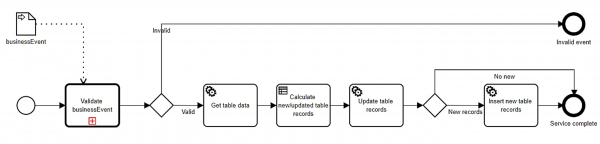

In BPMN terms, a Business Automation service typically looks like this:

Each service is modeled as a short-running BPMN process, composed of Service tasks, Decision tasks, and Call Activities representing other short-running processes. The service is triggered by a business event, represented by a client API call. The first step is validating the business event, which typically involves some decision logic on the business event and some retrieved existing data. If valid, one or more Service tasks retrieve additional database records, followed by a Decision task that implements the subject matter expert’s business logic in DMN. In this methodology, DMN is used for all modeler-defined business logic, not just that related to decisions. The result of that business logic is saved in new or updated database table records, and selected portions of it may be returned in the service response. It’s a simple pattern, but fits a wide variety of applications.
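To make the pattern concrete, here is a minimal sketch in Python rather than BPMN and DMN. It is only an illustration of the sequence validate, retrieve, decide, save, respond. The `Database` class, the table names, the event fields, and the balance rule are all hypothetical stand-ins, not anything taken from the Trisotech platform or the course.

```python
from dataclasses import dataclass, field


@dataclass
class Database:
    """In-memory stand-in for the real database tables (hypothetical)."""
    accounts: dict = field(default_factory=dict)  # account_id -> balance
    ledger: list = field(default_factory=list)    # rows created by the service


def validate_event(event: dict, db: Database) -> list:
    """Validation: decision logic on the event plus existing data."""
    errors = []
    if event.get("amount", 0) <= 0:
        errors.append("amount must be positive")
    if event.get("account_id") not in db.accounts:
        errors.append("unknown account")
    return errors


def decide(event: dict, balance: float) -> dict:
    """Stand-in for the DMN decision service: pure logic, no database access."""
    new_balance = balance - event["amount"]
    return {
        "ledger_row": {"account_id": event["account_id"], "amount": event["amount"]},
        "new_balance": new_balance,
        "approved": new_balance >= 0,
    }


def handle_event(event: dict, db: Database) -> dict:
    """The short-running process, triggered by one business event."""
    errors = validate_event(event, db)
    if errors:
        return {"status": "rejected", "errors": errors}
    balance = db.accounts[event["account_id"]]                  # retrieve
    outcome = decide(event, balance)                            # decide
    if not outcome["approved"]:
        return {"status": "rejected", "errors": ["insufficient balance"]}
    db.ledger.append(outcome["ledger_row"])                     # save new record
    db.accounts[event["account_id"]] = outcome["new_balance"]   # update record
    return {"status": "ok", "new_balance": outcome["new_balance"]}
```

Keeping `decide` free of database access mirrors the split described above: Service tasks move the data, and the Decision task holds the business logic, so that logic can be tested on its own.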

Implementation Methodology

My new training describes a specific methodology for implementing that pattern. It goes like this:

The first step is whiteboarding the business logic in Excel. In many cases, the subject matter expert has already done this! It’s important to create a full set of example business events, and to use Excel formulas – references to cells in the event record – instead of hard-coded values. If you can do that, you can learn to do the rest. It is assumed that the subject matter expert can verify the correctness of the outcome values for each business event.

Now, referring to the BPMN diagram, we start in the middle, with the Decision task labeled here Calculate new/updated table records. A BPMN Decision task (the spec uses the archaic term businessRule task) invokes a DMN decision service. So following whiteboarding, the next step in the methodology is the DMN model that generalizes the whiteboard business logic. I prefer to put all the business logic in a single DMN model with multiple output decisions in the resulting decision service, but some may prefer to break it up into multiple Decision tasks, each invoking a simpler decision model. That is a little more work but may be easier for some stakeholders to consume. The DMN model is in one sense the hardest part of the project, although debugging DMN is a lot easier than debugging BPMN, since you can test the DMN model within the modeling environment. The subject matter expert must confirm that the DMN model matches the whiteboard result for all test cases.

On the Trisotech platform, Decision tasks are synchronized to their target DMN service, so that any changes in the DMN logic are automatically reflected in the BPMN, without the need to recompile and deploy the decision service. That’s a big timesaver. Decision tasks also automatically import to the process all FEEL datatypes used in the DMN model, another major convenience.

Next we go back to the beginning of the process and configure each step. The key difference between executable BPMN models and the descriptive models we use in BPMN Method and Style is the data flow. Process variables, depicted in the BPMN diagram as data objects, are mapped to and from the inputs and outputs of the various tasks using dotted connectors called data associations. Trisotech had the brilliant idea of borrowing FEEL and boxed expressions from DMN to business-enable BPMN. In particular, the data mappings just mentioned are modeled as FEEL boxed expressions, sometimes a simple literal expression but possibly a context or decision table. If you know DMN, executable BPMN becomes straightforward. FEEL is also used in gateway logic. Unlike Method and Style, where the gate labels express the gateway logic, in executable BPMN the labels are just annotations. The actual gateway logic is a Boolean FEEL expression attached to each gate.
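The distinction between a gate's label and its condition can be sketched in a few lines of Python. This is an analogy only: on the platform the mapping and the conditions are written in FEEL, and the variable names, gate labels, and credit-limit rule here are hypothetical.

```python
# Process variables (data objects in the diagram); the names are hypothetical.
process_vars = {
    "order": {"quantity": 10, "unit_price": 25.0},
    "credit_limit": 200.0,
}


def map_task_input(variables: dict) -> dict:
    """Data input mapping: build a task input from process variables,
    in the spirit of a boxed context with one entry per input."""
    order = variables["order"]
    return {
        "total": order["quantity"] * order["unit_price"],
        "limit": variables["credit_limit"],
    }


# Each gate pairs a label (annotation only) with the Boolean condition
# that actually decides the routing.
GATES = [
    ("Within limit", lambda d: d["total"] <= d["limit"]),
    ("Over limit", lambda d: d["total"] > d["limit"]),
]


def route(data: dict) -> str:
    """Exclusive gateway: follow the first gate whose condition is true."""
    for label, condition in GATES:
        if condition(data):
            return label
    raise ValueError("no gate condition was satisfied")
```

Renaming a label in `GATES` changes nothing about the routing, which is the point: only the condition is evaluated.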

Database operations and interactions with external data use Service tasks. In Trisotech, a Service task executes a REST service operation, mapping process data to the operation input parameters and then mapping the service output to other process variables, again using FEEL and boxed expressions. The BPMN model’s Operation Library is a catalogue of service operations available to Service tasks. Entries in this catalogue come either from importing OpenAPI or OData files obtained from the service provider or from manually creating them from service provider documentation. We go through the details of all that in the training.

Stock Trading App

In the training, each student builds their own copy of a Stock Trading App – simulated, but based on real-time stock quotes – using three database tables: Trade, a record of each Buy or Sell transaction; Position Balance, a record of the net open and closed position on each stock traded plus the Cash balance; and Portfolio, a summary of the Position Balance table. I provide the whiteboard logic and guide students through the implementation. The real-time stock quotes use the Yahoo Finance API, which requires manually creating the Operation Library entry from service provider documentation.

We use the OData standard to expose database operations as REST APIs in the cloud. OData relies on a data gateway that translates between the REST call and the native protocol of each database. In the training we use a commercial product for that, Skyvia Connect. Each student has their own MySQL database on my website and a Skyvia endpoint linked to it. Students learn how to perform Create, Retrieve, Update, and Delete operations on the three database tables, and how to map process variables to and from the operation parameters.
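For readers unfamiliar with OData, the following Python sketch shows the general shape of the four operations as REST requests, following common OData URL conventions (`$filter` for queries, a key in parentheses to address a single record). It only builds request descriptions and sends nothing. The base URL, the entity set name `Trade`, and the column names are hypothetical; the actual names exposed by a given Skyvia endpoint may differ.

```python
from urllib.parse import quote

# Hypothetical endpoint; a real one would come from the data gateway.
BASE_URL = "https://example.invalid/odata"


def retrieve(table: str, filter_expr: str = "") -> dict:
    """Retrieve: GET on the entity set, optionally narrowed by $filter."""
    url = f"{BASE_URL}/{table}"
    if filter_expr:
        url += "?$filter=" + quote(filter_expr)
    return {"method": "GET", "url": url}


def create(table: str, record: dict) -> dict:
    """Create: POST the new record to the entity set."""
    return {"method": "POST", "url": f"{BASE_URL}/{table}", "json": record}


def update(table: str, key: int, changes: dict) -> dict:
    """Update: PATCH only the changed fields of the record with this key."""
    return {"method": "PATCH", "url": f"{BASE_URL}/{table}({key})", "json": changes}


def delete(table: str, key: int) -> dict:
    """Delete: DELETE the record with this key."""
    return {"method": "DELETE", "url": f"{BASE_URL}/{table}({key})"}
```

In the process model, the equivalent of the `record` and `changes` arguments is what the data input mapping of each Service task produces from process variables.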

To learn the Low-Code methodology, it’s important for students to build their own copy of the app themselves. Even though I lead them through the steps, it requires getting every detail right in order to avoid runtime errors. Debugging executable BPMN is an order of magnitude harder than debugging DMN, because you need to deploy the process in order to test it, and instead of friendly validation errors such as you get in the DMN Modeler, you are usually confronted with a cryptic runtime error message. So the training spends a lot of time on testing, debugging techniques, and data validation up front, things that developers take for granted but that try the patience of most business users. Students who successfully build the app and make a few trades with it are recognized with certification.

At the end of the course, students use my version of the app to trade using a common database, which includes a Leaderboard of trader performance.

Status

The beta version of the training was just launched and should run until November. A good knowledge of DMN, at the level of my DMN Advanced training, is a prerequisite, and the beta users have that. Before general availability of the course, I will be offering attractive pricing on a bundle including the DMN and Business Automation training, but I have not determined the pricing yet.

Also, I expect some enhancements to the Trisotech platform during the next two or three months that will be incorporated into the GA training, which I hope to launch in December or January. In the meantime, you can take a look at the Introduction and Overview here. Please contact me if you are interested in this course or have questions about it.
