TIBCO Insight Platform Powered by Artificial Intelligence

Blog: The Tibco Blog

As more and more data is generated, making “backend” systems smarter becomes critical. Imagine that you run the fraud department of a retail bank. With more than $100B worth of credit card transactions and more than 500 million transactions a day, there is a lot of data to analyze. Your staff probably work 10-12 hours a day and are overworked, and fraud systems that flag a lot of false positives do not help the situation. Meanwhile, fraudsters work 24 hours a day around the globe, coming up with new ways to exploit vulnerabilities in the system.

With so much data, you will no doubt need more computing power. There are some interesting ways to “lease” computing power on demand, and we will look at some of them in this series.

Machine learning to help create smarter systems

Let’s look at how to design systems that can ingest this much data and use the law of large numbers to get far better insight from it. We have all heard the saying “It takes money to make money.” In the digital economy, “It takes data to make insight.”
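To make the law-of-large-numbers point concrete, here is a minimal Python sketch, assuming each transaction is independently fraudulent with a fixed, hypothetical probability. The estimated fraud rate converges toward the true rate as the sample grows, with the typical error shrinking roughly in proportion to 1/√n. The function name `estimate_fraud_rate` and the 0.1% rate are illustrative assumptions, not part of any TIBCO product.

```python
import random


def estimate_fraud_rate(n: int, true_rate: float, seed: int = 42) -> float:
    """Estimate a fraud rate from n simulated transactions.

    Assumes each transaction is independently fraudulent with probability
    true_rate, a simplification of real fraud data.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    frauds = sum(1 for _ in range(n) if rng.random() < true_rate)
    return frauds / n


if __name__ == "__main__":
    TRUE_RATE = 0.001  # hypothetical: 1 fraudulent transaction in 1,000
    for n in (1_000, 100_000, 1_000_000):
        estimate = estimate_fraud_rate(n, TRUE_RATE)
        print(f"n={n:>9,}  estimate={estimate:.5f}  error={abs(estimate - TRUE_RATE):.5f}")
```

With only a thousand transactions the estimate can easily be off by a large fraction of the true rate; with a million it is typically within a few hundredths of a percentage point.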

Gartner has published its list of the Top 10 Strategic Technology Trends for 2017.

Notice that “Applied AI & Advanced Machine Learning” is one of the three trends that enterprises are using to get “smarter”. Why is machine learning getting so much attention when the discipline has existed for decades? I think it is because of the explosion of data. Machine learning works on the principle that the more data you provide, the more accurate your models (the math equations) can become. Billions of dollars’ worth of transactions per day, or billions of interactions with IoT devices per day, now generate enough data for machine learning algorithms to build better predictive models.

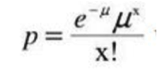

Here is where the rubber meets the road. With more and more data, machine learning platforms can generate better and more accurate math equations from machine learning algorithms. For example, with a gigabyte of customer behavior data, we might get an equation like this to predict “p” (the probability of an action):
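The exact equation depends on the algorithm and the data. As an illustrative assumption, a common form for predicting a probability is logistic regression, where each $x_i$ is a feature (for example, transaction amount or number of purchases in the last hour) and each $\beta_i$ is a coefficient learned from the data:

$$
p = \frac{1}{1 + e^{-(\beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k)}}
$$

Retraining on new data keeps this form but produces new $\beta$ values.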

H2O.ai and similar machine learning platforms are extremely efficient at producing equations like this from large datasets. The challenge is that once you have created and operationalized a mathematical model (more on this later), the equation can become stale because the patterns in the data change over time. For example, fraud patterns change, and an old equation may not detect new patterns reliably. What do you do in this scenario? You recreate the equation with your machine learning platform, using the new data. This time, your equation might look like this:
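Here is a minimal Python sketch of “recreating the equation”, assuming a one-feature logistic model fitted with plain batch gradient descent. It stands in for what a platform such as H2O.ai does at much larger scale and is not H2O’s API. The helpers `simulate` and `fit_logistic`, the coefficient values, and the simulated pattern shift are all hypothetical.

```python
import math
import random


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def simulate(n: int, b0: float, b1: float, seed: int) -> tuple[list[float], list[int]]:
    """Generate labels from a known logistic 'fraud pattern' (hypothetical data)."""
    rng = random.Random(seed)
    xs = [rng.uniform(-3.0, 3.0) for _ in range(n)]
    ys = [1 if rng.random() < sigmoid(b0 + b1 * x) else 0 for x in xs]
    return xs, ys


def fit_logistic(xs: list[float], ys: list[int], lr: float = 0.5,
                 epochs: int = 300) -> tuple[float, float]:
    """Fit p = sigmoid(b0 + b1 * x) by batch gradient descent on log-loss."""
    b0 = b1 = 0.0
    n = len(xs)
    for _ in range(epochs):
        g0 = g1 = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(b0 + b1 * x) - y  # gradient of log-loss w.r.t. z
            g0 += err
            g1 += err * x
        b0 -= lr * g0 / n
        b1 -= lr * g1 / n
    return b0, b1


if __name__ == "__main__":
    # Original pattern: risk rises with the (standardized) feature x.
    old_x, old_y = simulate(1000, b0=-1.0, b1=2.0, seed=1)
    # Fraudsters adapt: the relationship reverses.
    new_x, new_y = simulate(1000, b0=-1.0, b1=-2.0, seed=2)
    print("original equation: b0=%.2f b1=%.2f" % fit_logistic(old_x, old_y))
    print("retrained equation: b0=%.2f b1=%.2f" % fit_logistic(new_x, new_y))
```

Refitting the same model form on data from the shifted pattern flips the sign of the learned coefficient, so the old equation would now rank transactions backwards.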

As you will quickly realize, you need some kind of feedback loop into the platform to keep your equations accurately predicting the patterns in the data.
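One simple way to decide when such a loop should trigger retraining is to compare recent predictions with confirmed outcomes. The sketch below assumes a sliding window and an accuracy threshold; `DriftMonitor`, the window size, and the 90% threshold are hypothetical choices for illustration.

```python
from collections import deque


class DriftMonitor:
    """Track recent prediction accuracy and flag when retraining is needed."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9):
        self.window = window
        self.min_accuracy = min_accuracy
        # Oldest results drop off automatically once the window is full.
        self.hits: deque[bool] = deque(maxlen=window)

    def record(self, predicted: bool, actual: bool) -> None:
        self.hits.append(predicted == actual)

    def accuracy(self) -> float:
        return sum(self.hits) / len(self.hits) if self.hits else 1.0

    def needs_retraining(self) -> bool:
        # Only decide once the window is full, to avoid reacting to noise.
        return len(self.hits) == self.window and self.accuracy() < self.min_accuracy


if __name__ == "__main__":
    monitor = DriftMonitor(window=100, min_accuracy=0.9)
    for _ in range(100):
        monitor.record(predicted=True, actual=True)
    print(monitor.needs_retraining())  # False
    for _ in range(20):  # the pattern shifts and predictions start missing
        monitor.record(predicted=True, actual=False)
    print(monitor.accuracy(), monitor.needs_retraining())  # 0.8 True
```

Because fraud is rare, a real deployment would more likely watch precision and recall (for example, the false-positive rate mentioned earlier) than raw accuracy; the windowing idea stays the same.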

Insight Platform creates a feedback ecosystem

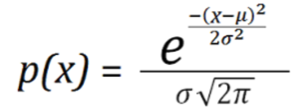

TIBCO Insight Platform does exactly that. It lets you build intelligent models visually, generate actionable insights in real time, and implement the resulting insights as a closed-loop solution with built-in continuous learning.

With the TIBCO Insight Platform, machine learning becomes part of the “journey” and not a siloed exercise. As a digital organization, here is the journey you take to gain better insights from big data:

1. Access data from different sources (and clean and transform it with a few clicks)

2. Analyze data

3. Build models using H2O, R, MATLAB, etc.

4. Operationalize models by integrating them in your enterprise applications

5. Collect output from the applications and send it back to step 1
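These five steps form a loop rather than a one-way pipeline. The Python sketch below assumes a deliberately tiny “model” (the observed fraud rate per merchant category) so that the loop itself is easy to see; the function names, categories, and 0.2 threshold are hypothetical and do not correspond to Insight Platform APIs.

```python
from collections import defaultdict

# A labeled transaction: (merchant_category, was_fraud). Hypothetical schema.
Labeled = tuple[str, bool]


def build_model(history: list[Labeled]) -> dict[str, float]:
    """Steps 1-3: access labeled data and 'train' a per-category fraud rate."""
    counts: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # [frauds, total]
    for category, was_fraud in history:
        counts[category][0] += int(was_fraud)
        counts[category][1] += 1
    return {cat: frauds / total for cat, (frauds, total) in counts.items()}


def is_flagged(model: dict[str, float], category: str, threshold: float = 0.2) -> bool:
    """Step 4: the operationalized model flags high-risk categories."""
    return model.get(category, 0.0) >= threshold


def run_cycle(history: list[Labeled],
              batch: list[Labeled]) -> tuple[list[bool], list[Labeled]]:
    """One trip around the loop: build, score the batch, feed outcomes back."""
    model = build_model(history)
    flags = [is_flagged(model, category) for category, _ in batch]
    # Step 5: confirmed outcomes from the applications rejoin the training data.
    return flags, history + batch


if __name__ == "__main__":
    history = ([("electronics", False)] * 9 + [("electronics", True)]
               + [("gift_cards", False)] * 9 + [("gift_cards", True)])
    wave = [("gift_cards", True)] * 5  # a new fraud pattern appears

    flags, history = run_cycle(history, wave)
    print(flags)  # [False, False, False, False, False]
    flags, history = run_cycle(history, wave)
    print(flags)  # [True, True, True, True, True]
```

In the first cycle the model has seen too little gift-card fraud to flag the new wave; once those outcomes are fed back in step 5, the rebuilt model flags the same kind of transaction.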

Instead of giving the math equations (or Java code) directly to your users, TIBCO Insight Platform can provide them with something they can actually use to gain better insight.

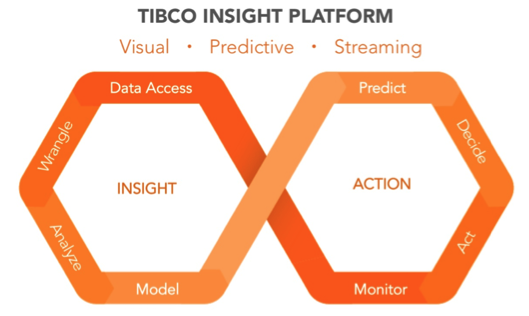

For example, imagine you have generated the equation or model using a machine learning tool (H2O.ai in this case), and now you want to operationalize that model. Here are the steps you will follow:

First, you use TIBCO StreamBase to run the model against transactional data in a visual, model-driven environment.

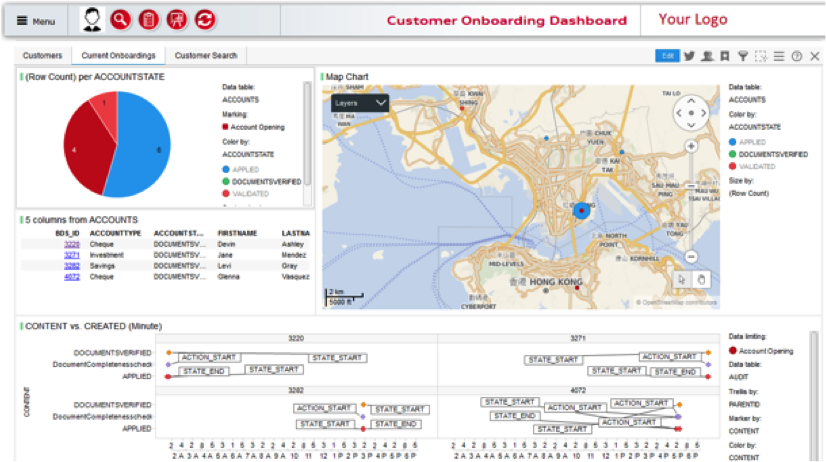

You then send the output of the model (plus the relevant business logic) from StreamBase to the application of your choice (application A or application B in the figure above). The application might look like this:

The output from the “action” in this application can then be sent back to the operational model (TIBCO StreamBase), which can trigger re-evaluation of the machine learning algorithm based on the new data from the application. This feedback loop helps you adapt to the constantly changing environment your business operates in.
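The scoring, routing, and feedback flow can be sketched in plain Python. This is not StreamBase code; it only mirrors the logic under stated assumptions: the model is any function returning a fraud probability `p`, the business rules and thresholds in `route` are hypothetical, and the two applications are stubs that return the action a system or analyst took.

```python
from typing import Callable, Dict, Iterable, Iterator, List, Tuple

Txn = Dict[str, float]          # hypothetical fields: "amount", "velocity"
Model = Callable[[Txn], float]  # returns the probability of fraud p
App = Callable[[Txn], str]      # returns the action taken, e.g. "false_positive"


def route(p: float, amount: float) -> str:
    """Hypothetical business rules layered on top of the model score."""
    if p >= 0.9 or (p >= 0.6 and amount > 5_000):
        return "A"        # e.g. application A: block the card
    if p >= 0.5:
        return "B"        # e.g. application B: analyst review queue
    return "approve"


def process_stream(txns: Iterable[Txn], model: Model, apps: Dict[str, App],
                   feedback: List[Tuple[Txn, float, str]]) -> Iterator[str]:
    """Score each event as it arrives, route it, and capture app actions."""
    for txn in txns:
        p = model(txn)
        destination = route(p, txn["amount"])
        if destination in apps:
            action = apps[destination](txn)
            feedback.append((txn, p, action))  # sent back for re-evaluation
        yield destination


def toy_model(txn: Txn) -> float:
    """Stand-in for a trained model: risk grows with purchase velocity."""
    return min(1.0, txn["velocity"] / 10)


if __name__ == "__main__":
    apps: Dict[str, App] = {
        "A": lambda t: "confirmed_fraud",
        "B": lambda t: "false_positive",
    }
    stream = [
        {"amount": 40.0, "velocity": 1},     # p = 0.1
        {"amount": 9_000.0, "velocity": 7},  # p = 0.7 on a large amount
        {"amount": 120.0, "velocity": 5},    # p = 0.5
    ]
    feedback: List[Tuple[Txn, float, str]] = []
    print(list(process_stream(stream, toy_model, apps, feedback)))  # ['approve', 'A', 'B']
    print([action for _, _, action in feedback])  # ['confirmed_fraud', 'false_positive']
```

Keeping the business rules in `route`, separate from the model, means the thresholds can change without retraining, while the `feedback` records are what a retraining job would consume.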

TIBCO Insight Platform gives you the framework to access, visualize, analyze, and operationalize data and models, while constantly monitoring for and detecting new insights as patterns in the data change. In this article, we focused mostly on operationalizing and applying machine learning algorithms to transaction data. Next up, we will look at other aspects of the TIBCO Insight Platform, including visualization, live dashboards, and grid computing.

Learn more about Insight Platforms, find out why TIBCO was named a strong performer in The Forrester Wave™: Enterprise Insight Platform Suites, Q4 2016, and try the TIBCO Spotfire analytics solution for free.
