.NET : Processes, Threads and Multi-threading

So I’ve been digging around in my Evernote to publish something this evening that might be of actual use. My notes tend to be lots of small tidbits that I’ll tag into Evernote whilst I’m working on projects, and whilst they’re great on their own as golden nuggets of information that I’ll always have access to, they don’t tend to be useful as a blog article.

I did come across some notes from a couple of years ago around threading, specifically threading in the .NET framework. So as I’ve little time this week to dedicate to writing some new content, I thought I’d cheat a bit and upload these notes, which should go some way in introducing you to threading and processes on the Windows / .NET platform. Apologies in advance for spelling errors as well as the poor formatting of this article. I’ve literally not got the time to make it look any prettier.

So, what is a Windows process?

An application is made up of data and instructions. A process is an instance of a running application. It has its own ‘process address space’. So a process is a boundary for threads and data.

A .NET managed process can have a subdivision, called an AppDomain.

Right, so what is a thread?

A thread is an independent path of execution of instructions within a process.

For unmanaged threads (that is, non .NET), threads can access any part of the process address space.

For managed threads (.NET CLR managed), threads only have access to the AppDomain within the process, not the entire process. This is more secure.

A program with multiple simultaneous paths of execution (concurrent threads) is said to be ‘multi-threaded’. Imagine some string (a thread of string) that goes in and out of methods (needle heads) from a single starting point (main). That string can split off into another piece of string that goes in and out of other methods (needle heads) at the very same time as its sibling thread.

When a process has no more active threads (i.e. they’re all in a dead state because all the instructions within each thread have already been processed by the CPU), the process exits (Windows ends it).

So think about what happens when you manually end a process via Task Manager: you are forcing the currently executing threads, and the threads scheduled to execute, into a ‘dead’ state. The process is killed as it has no more code instructions to execute.

Once a thread is started, the IsAlive property is set to true. Checking this will confirm whether a thread is active or not.

Each thread created by the CLR is assigned its own memory ‘stack’ so that local variables are kept separate.
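To make the point about separate stacks concrete, here is a minimal sketch. The class and method names (StackDemo, CountTo) are made up for illustration. Two threads run the same method, and each gets its own copy of the local variable, so neither count interferes with the other.

```csharp
using System;
using System.Threading;

public static class StackDemo
{
    // 'total' is a local variable, so it lives on the stack of whichever
    // thread is running this call. Two threads means two separate copies.
    public static int CountTo(int limit)
    {
        int total = 0;
        for (int i = 0; i < limit; i++)
        {
            total++;
        }
        return total;
    }

    public static void Main()
    {
        int resultA = 0;
        int resultB = 0;

        Thread a = new Thread(() => resultA = CountTo(1000));
        Thread b = new Thread(() => resultB = CountTo(2000));

        a.Start();
        b.Start();

        // Wait for both threads to finish before reading their results.
        a.Join();
        b.Join();

        Console.WriteLine($"A counted {resultA}, B counted {resultB}");
        // prints "A counted 1000, B counted 2000"
    }
}
```

Had total been a static field instead of a local, both threads would have been incrementing the same piece of memory and the results would no longer be predictable.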

Thread Scheduling

A CPU core, although it appears to process a billion things at the same time, can only execute the instructions of a single thread at any given moment. Which thread gets the CPU is determined by thread priority. If a thread has a high priority, it is scheduled ahead of any ready thread of a lower priority, and the instructions inside it are executed in sequential order. This requires that thread execution is scheduled according to priority. If threads have the same priority, however, then an equal amount of time is dedicated to each through time slicing (usually around 20ms for each thread, although the exact figure depends on the Windows version and configuration). This might leave low priority threads out in the cold if the CPU is being highly utilized, so to avoid starving them, Windows still makes sure they get some processing time, but a lot less than is given to the higher priority threads. The .NET CLR actually lets the Windows thread scheduler take care of managing all the time slicing for threads.

Windows uses pre-emptive scheduling. All that means is that once a thread is running on the CPU, Windows can (if it wants to) interrupt it and take the CPU away before the thread has finished, for example to run a higher priority thread.

Other operating systems may use non pre-emptive scheduling, meaning the OS cannot unschedule a running thread until that thread has finished or gives up the CPU voluntarily.

Thread States

Multi-threading, in terms of programming, is the co-ordination of multiple threads within the same application. It is the management of those threads as they move between different thread states.

A thread can be in…

- Ready state – The thread tells the OS that it is ready to be scheduled. Even if a thread is resumed, it must go to ‘Ready’ state to tell the OS that it is ready to be put in the queue for the CPU.

- Running state – Is currently using the CPU to execute its instructions.

- Dead state – The CPU has completed the execution of instructions within the thread.

- Sleep state – The thread goes to sleep for a period of time. On waking, it is put in Ready state so it can be scheduled for continued execution.

- Suspended state – The thread has stopped. It can suspend itself or be suspended by another thread, but it cannot resume itself. A thread can be suspended indefinitely.

- Blocked state – The thread is held up by another thread within the same memory space, for example one that holds a resource it needs. Once the blocking thread releases the resource or goes into dead state (completes), the blocked thread will resume.

- Waiting state – A thread will release its resources and wait to be moved into a ready state.
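The states above are conceptual names from my notes. In code, the CLR exposes them through the ThreadState enum, where (as far as I can tell) ‘dead’ shows up as Stopped and the sleep, blocked and waiting states are all reported as WaitSleepJoin. A rough sketch that watches one thread move through them, with the class name ThreadStateDemo being purely illustrative:

```csharp
using System;
using System.Threading;

public static class ThreadStateDemo
{
    public static void Main()
    {
        // The worker does nothing but sleep for half a second.
        Thread worker = new Thread(() => Thread.Sleep(500));

        Console.WriteLine(worker.ThreadState); // Unstarted
        Console.WriteLine(worker.IsAlive);     // False

        worker.Start();

        // Give the worker time to get going. This is timing dependent,
        // so treat the next state as "most likely" rather than guaranteed.
        Thread.Sleep(100);

        Console.WriteLine(worker.ThreadState); // most likely WaitSleepJoin
        Console.WriteLine(worker.IsAlive);     // True

        // Block the main thread until the worker has completed.
        worker.Join();

        Console.WriteLine(worker.ThreadState); // Stopped
        Console.WriteLine(worker.IsAlive);     // False
    }
}
```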

Why use multiple threads?

Using multiple threads allows your applications to remain responsive to the end user whilst doing background work. For example, you may have a Windows application that requires that the user continue working in the UI whilst an I/O operation is performed in the background (loading data from a network connection into the process address space, for example). Using multi-threading also gives you control over what parts of your applications (which threads) get priority CPU processing. Keeping the user happy whilst performing non critical operations on background threads can make or break an application. These less critical, low priority threads are usually called ‘Background’ or ‘Worker’ threads.

Example

If you create a simple Windows form and drag another window over it, your form will repaint itself to the Windows UI.

If you create a button on that form which, when clicked, puts the thread to sleep for 5 seconds (5000ms), the window you are dragging over your form will stay visible on the form, even after you have dragged it off again. The reason is that the form’s thread is being held up by the 5 second sleep, so it has to wait until the thread resumes before it can repaint itself to the screen.

Implementing multi-threading, i.e. putting a background thread to sleep and leaving the first thread free to repaint the window, would keep users happy.

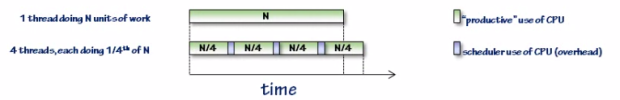

Multi-threading on single core / single processor

Making your app multi-threaded can hurt performance on machines that have a single CPU. The reason for this is that the more threads you use, the more time slicing the CPU has to perform to ensure all threads get equal time being processed. The overhead involved in the scheduler switching between multiple threads to allow for processing time slices extends the processing time. There is additional ‘scheduler admin’ involved.

If you have a multi-core system however, let’s say four CPU cores, this becomes less of a problem, because threads can be processed physically at the same time across the cores. As long as there are no more runnable threads than cores, little switching between threads is needed.

Using multiple threads also makes code a little harder to read, and testing / debugging becomes more difficult because threads can be running at the same time, which makes them hard to monitor.

CLR Threads

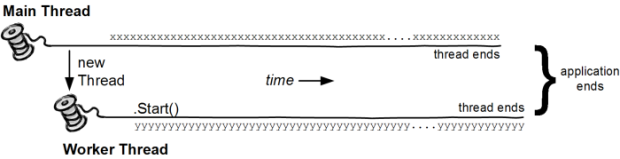

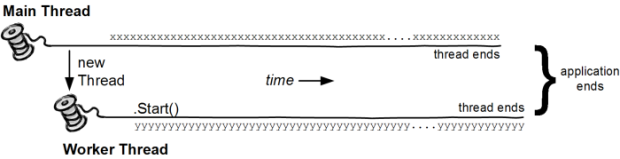

A thread in the CLR is represented as a System.Threading.Thread object. When you start an application, the entry point of the application is the start of the single thread that every new application has. To start running code on new threads, you must create a new instance of System.Threading.Thread, passing in a delegate that refers to the method that should be the first point of code execution, and then call Start(). This in turn tells the CLR to create a new thread within the process space.

To access the properties of the currently executing thread, you can use the ‘CurrentThread’ static property of the thread class: Thread.CurrentThread.property
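As a small illustration, the sketch below reads a few properties from Thread.CurrentThread, first on the main thread and then on a second thread. The helper name Describe and the thread name "Worker" are just placeholders I’ve picked for the example.

```csharp
using System;
using System.Threading;

public static class CurrentThreadDemo
{
    // Builds a description of whichever thread happens to call this method.
    public static string Describe()
    {
        Thread current = Thread.CurrentThread;
        string name = current.Name ?? "(unnamed)";

        return "Name=" + name
            + ", Id=" + current.ManagedThreadId
            + ", IsBackground=" + current.IsBackground
            + ", Priority=" + current.Priority;
    }

    public static void Main()
    {
        // Called on the main thread.
        Console.WriteLine(Describe());

        // Called on a second thread, so CurrentThread is now the worker.
        Thread worker = new Thread(() => Console.WriteLine(Describe()));
        worker.Name = "Worker";
        worker.Start();
        worker.Join();
    }
}
```

The same method reports different values depending on which thread executes it, which is exactly why CurrentThread is handy for logging and debugging.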

You can point a new thread at either a parameterless method or a method that takes a single object parameter. These correspond to 2 delegate types:

- ThreadStart – new Thread(Method); where Method is declared as void Method()

- ParameterizedThreadStart – new Thread(Method); where Method is declared as void Method(object stateArg), and the argument is supplied via Start(arg)

ThreadStart example

Thread backgroundThread = new Thread(ThisMethodWillExecuteOnSecondThread);

backgroundThread.Name = "A name is useful for identifying thread during debugging!";

backgroundThread.Priority = ThreadPriority.Lowest;

backgroundThread.Start(); //Thread put in ready state and waits for the scheduler to put it into a running state.

public void ThisMethodWillExecuteOnSecondThread()

{

//Do Something

}

ParameterizedThreadStart example

Thread background = new Thread(ThisMethodWillExecuteOnSecondThread);

background.Start("string");

public void ThisMethodWillExecuteOnSecondThread(object stateArg)

{

string value = stateArg as string;

//Do Something

}

Thread lifetime

When the thread is started, the life of the thread is dependent on a few things:

- When the method called at the start point of the thread returns (completes).

- When the thread object has its Interrupt or Abort method invoked from another thread, which essentially injects an exception into the thread from outside (asynchronous exception).

- When an unhandled exception occurs within the thread (synchronous exception).

Synchronous exception = From within.

Asynchronous exception = From outside.
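Here is a hedged sketch of the ‘from outside’ case using Thread.Interrupt. The names InterruptDemo and SleepUntilInterrupted are invented for the example. I’ve used Interrupt rather than Abort because Thread.Abort is not supported on .NET Core and .NET 5+ (it throws PlatformNotSupportedException there), whereas these notes were written against the .NET Framework.

```csharp
using System;
using System.Threading;

public static class InterruptDemo
{
    // Starts a thread that sleeps forever, interrupts it from the calling
    // thread, and reports whether the exception arrived inside the sleeper.
    public static bool SleepUntilInterrupted()
    {
        bool wasInterrupted = false;

        Thread sleeper = new Thread(() =>
        {
            try
            {
                Thread.Sleep(Timeout.Infinite);
            }
            catch (ThreadInterruptedException)
            {
                // The exception was injected by another thread.
                wasInterrupted = true;
            }
        });

        sleeper.Start();
        Thread.Sleep(100);   // let the sleeper settle into its Sleep call
        sleeper.Interrupt(); // wakes the sleeper with ThreadInterruptedException
        sleeper.Join();      // the sleeper's method returns, so the thread ends

        return wasInterrupted;
    }

    public static void Main()
    {
        Console.WriteLine("Interrupted: " + SleepUntilInterrupted());
        // prints "Interrupted: True"
    }
}
```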

Thread Shutdown

Whilst threads will end in the scenarios listed above, you may wish to control when the thread ends and have the parent thread regain control before it leaves the current method.

A common pattern is for the Main() thread to start a secondary thread to take care of looping. It uses a volatile field (so every read sees the latest value written by another thread rather than a cached copy) to tell the secondary thread to finish its looping.

The main thread then calls Join() on the secondary thread, which blocks the main thread (not the secondary one) until the secondary thread has finished.
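The shutdown pattern described above can be sketched roughly as follows. The field and method names (_keepRunning, Loop, Run) are my own placeholders, and the sleep durations are arbitrary.

```csharp
using System;
using System.Threading;

public static class ShutdownDemo
{
    // volatile: the looping thread re-reads this field on every iteration
    // instead of relying on a cached value.
    private static volatile bool _keepRunning;
    private static int _iterations;

    private static void Loop()
    {
        while (_keepRunning)
        {
            _iterations++;
            Thread.Sleep(10); // simulate a unit of work
        }
    }

    // Runs the worker for roughly 100ms, then shuts it down cleanly.
    public static int Run()
    {
        _keepRunning = true;
        _iterations = 0;

        Thread worker = new Thread(Loop);
        worker.Start();

        Thread.Sleep(100);    // let the worker loop for a while
        _keepRunning = false; // ask the worker to finish its loop
        worker.Join();        // block this thread until the worker has ended

        return _iterations;
    }

    public static void Main()
    {
        Console.WriteLine("Worker stopped after " + Run() + " iterations.");
    }
}
```

Because the worker checks the flag itself, it always stops at a known point in its loop rather than being torn down halfway through a piece of work.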

Background vs Foreground Threads

Foreground Thread – A foreground thread, if still running, will keep the application alive until the thread ends. A foreground thread has its IsBackground property set to false (the default value).

Background Thread – A background thread is terminated once there are no more foreground threads executing. They are seen as non important, throw away threads (IsBackground = true).
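A quick way to see the difference is a worker that loops forever. In the sketch below (BackgroundDemo and StartBackgroundWorker are illustrative names), the process still exits when Main returns because the worker is flagged as a background thread. Change IsBackground to false and the process should keep running until you kill it.

```csharp
using System;
using System.Threading;

public static class BackgroundDemo
{
    public static Thread StartBackgroundWorker()
    {
        Thread worker = new Thread(() =>
        {
            while (true)
            {
                Console.WriteLine("Background thread working...");
                Thread.Sleep(200);
            }
        });

        // Mark as a throw away thread: it will not keep the process alive.
        worker.IsBackground = true;
        worker.Start();
        return worker;
    }

    public static void Main()
    {
        StartBackgroundWorker();

        Thread.Sleep(500);
        Console.WriteLine("Main is returning; the process exits even though the worker is still looping.");
    }
}
```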

Thread Pools

The CLR also offers threads as a pooled resource. That is, a thread can be borrowed from a pool of available threads, used, and then returned to the pool.

Threads in the pool automatically have their IsBackground property set to true, meaning that they are not seen as important by the CLR: once every foreground thread in the process has ended, pool threads are ended too, whether their work is complete or not. Work handed to the pool is queued, and queued items are picked up by the next available pool thread, broadly on a first in, first out basis. When that thread finishes the work, it is returned to the pool ready for reuse. Thread pool threads are useful for non important background checking / monitoring that does not need to hold up the application.

//Queueing work onto a thread from the pool

ThreadPool.QueueUserWorkItem(MethodName, methodArgument); //The work is abandoned if the last foreground thread ends.
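For a fuller picture, here is a minimal runnable sketch of queueing work to the pool. Since pool threads are background threads, the main thread has to wait for the work explicitly, otherwise the process could exit before it runs. I’ve used a ManualResetEvent for that, and the names PoolDemo and RunOnPool are made up for the example.

```csharp
using System;
using System.Threading;

public static class PoolDemo
{
    // Queues one work item and reports whether it ran on a pool thread.
    public static bool RunOnPool(object argument)
    {
        bool ranOnPoolThread = false;

        using (ManualResetEvent done = new ManualResetEvent(false))
        {
            ThreadPool.QueueUserWorkItem(state =>
            {
                Console.WriteLine("Processing '" + state + "' on a pool thread.");
                ranOnPoolThread = Thread.CurrentThread.IsThreadPoolThread;
                done.Set(); // signal the waiting thread that the work is complete
            }, argument);

            // Without this wait, the caller could carry on (or the process
            // could exit) before the pool thread has done anything.
            done.WaitOne();
        }

        return ranOnPoolThread;
    }

    public static void Main()
    {
        Console.WriteLine("Ran on pool thread: " + RunOnPool("some work"));
        // prints "Ran on pool thread: True"
    }
}
```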
