How test and learn delivers value in data-driven marketing

Blog: Capgemini CTO Blog

Gone are the days when marketers made decisions relying purely on intuition and experience. With data and analytics proliferating, marketers increasingly look to data to inform every decision, running tests to understand and validate what works and what doesn’t. A test could be as simple as changing the color of a button in the mobile app, or as complex as using different machine learning algorithms to send a personalized offer. In both cases, tests let marketers experiment by applying the change to a small percentage of the overall customer base and measuring the effect.

According to Gartner survey analysis, organizations that significantly outperform their competitors are almost twice as likely to make testing and experimentation a marketing priority. Running tests helps marketers on their journey to drive superior customer experience, growth, and return on investment (ROI). Almost half of companies in an Optimizely survey credited experimentation with driving a 10% uplift in revenue. Even a failed test yields some insight into customer behavior.

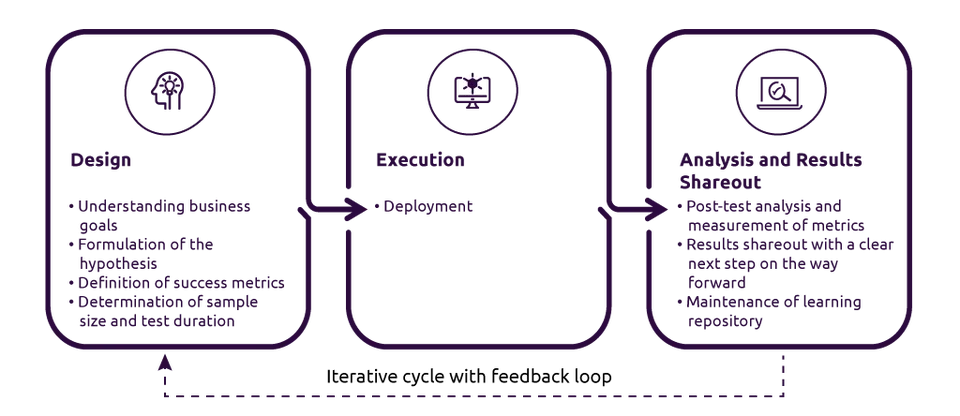

Three steps to good testing outcomes

Running a test that provides valuable learning without impacting the business adversely is a mix of science and art. A well-conceived test involves three steps, as follows:

Design

- Understanding business goals — Goals help to ensure that tests (aka experiments) are aligned to business objectives, which could be increasing customer engagement, improving campaign efficiency, or increasing revenue. They provide structure and scope to experimentation. Goals also enable prioritization when multiple tests are needed but cannot be run simultaneously.

- Formulation of hypothesis — A hypothesis clearly states “what” change is being made, “why” we want to make the change, and the expected outcome. For example, for an ecommerce business, using a more creative email subject line (“what”) might resonate better with its younger customer base (“why”), resulting in a higher email open rate and higher customer spend (expected outcome). Running the test will confirm or reject the hypothesis. An if-then-because statement is a good way of framing it: if we use a more creative subject line, then the open rate and customer spend will increase, because the subject line resonates better with younger customers. Insights from prior tests, analysis, intuition, and business knowledge, as well as creative thinking, help create hypotheses.

- Definition of the success metrics and alignment on next steps — It is important to have clear and precise KPIs (primary and secondary), ensuring that different individuals interpret the test results in the same way. This also ensures that the hypothesis is measurable before the test is run. Getting alignment with key stakeholders on the success metrics at the design stage makes it far more likely that the learnings from the test will subsequently be used.

- Determination of sample size and duration of the test — A large treatment size (i.e. exposing a large group of customers to the change) provides greater ability to detect a small change at the desired significance level. However, a large treatment size could be costly if the change being tested is detrimental to the business. For example, using a new algorithm that provides discount-rich, personalized offers to a large customer base could result in lower profitability and poor marketing ROI. Hence, it is essential to determine the minimum sample size that provides a statistically significant test result. This is done using power analysis, a common practice in scientific experiments that can be implemented using packages available in Python and other programming languages.
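To make the power analysis step concrete, here is a minimal sketch of a sample size calculation for a test that compares two rates, such as email open rates. It uses the standard normal-approximation formula for two proportions and only the Python standard library. The function name, the default significance level and power, and the example open rates are illustrative assumptions, not values from any real campaign.

```python
import math
from statistics import NormalDist


def min_sample_size_per_group(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate minimum customers needed in EACH group (treatment and control).

    Uses the normal approximation for a two-sided test of two proportions.
    alpha is the significance level; power is the chance of detecting the
    effect if it is real. Both defaults are common conventions, not rules.
    """
    for rate in (baseline_rate, expected_rate):
        if not 0 < rate < 1:
            raise ValueError("rates must be strictly between 0 and 1")
    if baseline_rate == expected_rate:
        raise ValueError("expected_rate must differ from baseline_rate")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical example: current open rate of 20%, hoping to reach 22%.
n = min_sample_size_per_group(0.20, 0.22)
print(f"Customers needed per group: {n}")
```

The smaller the uplift you want to detect, the larger the required group, which is the trade-off between sensitivity and business risk described above. Dedicated statistics packages offer more complete power calculations than this approximation.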

Execution

- Deployment — This is an operational step to run the test. Care needs to be taken to ensure that the sample size determined during the design phase is adhered to and the right population is exposed to the test.

Analysis and results shareout

- Post-test analysis and measurement of metrics — Even when every effort has been made to assign customers to the treatment and control (holdout) groups at random, some bias may have crept in and needs to be adjusted for. Regression modeling can improve the quality of the results (e.g. by adjusting for a difference in prior spend between the treatment group targeted by the marketing campaign and a holdout group that was not targeted). For tests that run over a long period, a mid-test results readout provides a kill switch, allowing the test to be stopped early if required.

- Results shareout — Quite often, teams run tests in order to present themselves as data driven, but when the results reject the hypothesis, decisions are still made as if the original hypothesis were true. Aligning with decision makers at the start on how the results will be used helps ensure commitment and proper use of the organization’s resources.

- Maintenance of a learning repository — This includes the goal, hypothesis, treatment and control details, sizing, duration, success metrics, result and next steps involved in the test. In large organizations, as people move in and out of teams, having a learning repository helps new members understand what has worked and what hasn’t, helping in ideation and formulation of new hypotheses for future use.
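The regression adjustment mentioned in the post-test analysis step can be sketched as follows. This is an illustrative example, not a prescribed method: it fits an ordinary least squares model of campaign spend on a treatment flag and prior spend, so the coefficient on the flag estimates the lift after accounting for prior spend. The function names and the tiny synthetic data set are assumptions made up for the illustration, and the solver is written with the standard library only to keep the sketch self-contained.

```python
def naive_lift(treated, spend):
    """Difference in average spend between treatment and holdout groups."""
    t = [y for flag, y in zip(treated, spend) if flag == 1]
    c = [y for flag, y in zip(treated, spend) if flag == 0]
    return sum(t) / len(t) - sum(c) / len(c)


def adjusted_lift(treated, prior_spend, spend):
    """Treatment coefficient from OLS: spend ~ intercept + treated + prior_spend."""
    rows = [(1.0, float(flag), float(x)) for flag, x in zip(treated, prior_spend)]
    k = 3
    # Build the normal equations (X'X) b = X'y as an augmented matrix.
    aug = [
        [sum(row[i] * row[j] for row in rows) for j in range(k)]
        + [sum(row[i] * y for row, y in zip(rows, spend))]
        for i in range(k)
    ]
    # Gaussian elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda idx: abs(aug[idx][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("design matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, k):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, k + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution.
    coef = [0.0] * k
    for i in reversed(range(k)):
        known = sum(aug[i][j] * coef[j] for j in range(i + 1, k))
        coef[i] = (aug[i][k] - known) / aug[i][i]
    return coef[1]  # coefficient on the treatment flag


# Synthetic data: the treatment group happens to contain higher prior spenders.
treated = [1, 1, 1, 1, 0, 0, 0, 0]
prior_spend = [100, 120, 140, 160, 60, 80, 100, 120]
# Constructed so that the true campaign effect is 5 per customer.
spend = [10 + 5 * flag + 0.5 * x for flag, x in zip(treated, prior_spend)]

print("Naive lift:   ", naive_lift(treated, spend))
print("Adjusted lift:", round(adjusted_lift(treated, prior_spend, spend), 4))
```

In this made-up data the naive comparison overstates the campaign effect because the treatment group already spent more before the test, while the adjusted estimate recovers the effect built into the data. In practice a statistics library would normally be used for the regression and would also report confidence intervals.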

Creating a test and learn culture

For organizations to truly build a test and learn culture, management support is critical. It takes courage for marketers to acknowledge and work with the unpredictability of human behavior. Great managers recognize this and are focused on outlining goals and providing direction to the team. They also understand the importance of having the right infrastructure and tools to enable employees to run tests at scale with speed. To conclude, a quote by Amazon’s Jeff Bezos sums up the importance that leading businesses place on test and learn: “Our success is a function of how many experiments we do per year, per month, per week, per day.”

Author

Darshit Tolia

Managing Consultant, Insights-Driven Enterprise (IDE), North America, Capgemini Invent