Training AIs doesn’t have to hurt (the planet)

Blog: Capgemini CTO Blog

AI offers great technological promise, including in sustainability solutions, but it is very power hungry. The question is how to train and operate AI solutions more sustainably while still harvesting the positive impact AI can have.

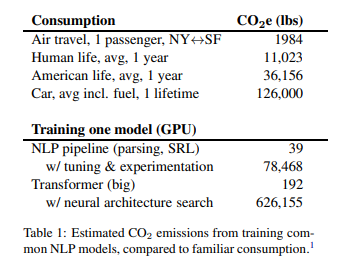

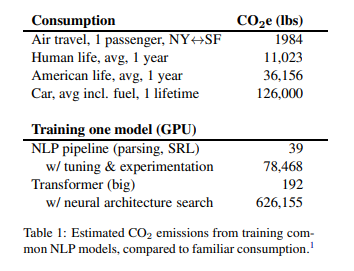

The carbon footprint of artificial intelligence is very real

Today, artificial intelligence (AI) technologies are increasing the effectiveness and productivity of applications by automating manual activities[1] that require cognitive capabilities previously considered unique to humans. However, all of this can come at a hefty carbon cost. Building and training an AI language system from scratch, for example, can generate up to 78,000 pounds of CO2 (twice as much as the average American exhales over their lifetime), while training an AI model with a different method can emit as much carbon as five American cars over their lifetimes, including their manufacture.[2]


Of course, AI models don’t just consume electricity when they are trained. Once trained and deployed, an AI model applies what it learned during training to newly collected evidence in order to draw conclusions, a process called inference. This activity requires processing power and therefore also consumes electricity throughout the model’s operation.
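To see why inference matters, it helps to add up a model’s lifetime energy: a one-off training cost plus a per-query cost that accumulates for as long as the model is in service. The sketch below does that arithmetic; every figure in it (training energy, energy per query, query volume) is a hypothetical placeholder, not a measurement of any real model.

```python
def lifetime_energy_kwh(training_kwh: float, kwh_per_inference: float,
                        inferences_per_day: float, days: int) -> float:
    """Total energy: one-off training plus inference over the deployment period."""
    return training_kwh + kwh_per_inference * inferences_per_day * days


# Hypothetical figures for illustration only; not measured from any real model.
TRAINING_KWH = 1_000.0
KWH_PER_INFERENCE = 0.0001  # 0.1 Wh per query
QUERIES_PER_DAY = 100_000

for days in (0, 30, 100, 365):
    total = lifetime_energy_kwh(TRAINING_KWH, KWH_PER_INFERENCE, QUERIES_PER_DAY, days)
    share = (total - TRAINING_KWH) / total  # fraction of energy spent on inference
    print(f"day {days:>3}: {total:>8.0f} kWh total, {share:.0%} from inference")
```

With these made-up numbers, inference consumes as much energy as training after 100 days and dominates thereafter; real ratios depend entirely on the model and how heavily it is used.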

What does “training” mean?

For any AI tool to work, it needs to be “trained.” AI tools (or, more precisely, machine learning algorithms) build mathematical models based on sample data, known as “training data,” to make predictions or decisions without being explicitly programmed to do so. In other words, it is through training that an AI application “becomes intelligent” and capable, for instance, of identifying patterns similar to those it saw during training.
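As a minimal illustration of training and inference, the sketch below fits the simplest possible model, a straight line y ≈ w·x + b, to sample data using gradient descent, then applies the learned parameters to an input it never saw. The data (generated from y = 2x + 1), learning rate, and epoch count are assumptions chosen for the example; real AI models follow the same loop with millions or billions of parameters, which is where the compute cost comes from.

```python
def train(xs, ys, lr=0.01, epochs=5000):
    """Fit y ≈ w * x + b to sample data with batch gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def predict(model, x):
    """Inference: apply the trained parameters to a new input."""
    w, b = model
    return w * x + b


# Training data generated from y = 2x + 1 (an assumption for this sketch).
xs = [0, 1, 2, 3, 4, 5]
ys = [2 * x + 1 for x in xs]
model = train(xs, ys)
print(predict(model, 10))  # close to 21, although 10 was not in the training data
```

Every training epoch touches every sample, so the cost of training grows with both the data size and the number of passes, whereas each prediction is a single cheap evaluation.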

Training an AI tool can be power intensive

Training an AI tool can be compute-intensive and therefore power hungry. In 2018, OpenAI stated that “… since 2012, the amount of compute used in the largest AI training runs has been increasing exponentially with a 3.4-month doubling time (by comparison, Moore’s Law had a two-year doubling period). Since 2012, this metric has grown by more than 300,000 times (a two-year doubling period would yield only a sevenfold increase).”
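The two growth figures in the OpenAI quote follow from simple doubling arithmetic: a quantity that doubles every d months grows by 2^(t/d) after t months. The sketch below works backwards from the 300,000× figure to the time span it implies, then asks how much a two-year doubling period would deliver over that same span. Function names are illustrative only.

```python
import math


def growth_factor(months: float, doubling_months: float) -> float:
    """Growth after `months` if a quantity doubles every `doubling_months`."""
    return 2 ** (months / doubling_months)


def months_for_growth(factor: float, doubling_months: float) -> float:
    """Months needed to grow by `factor` at the given doubling time."""
    return math.log2(factor) * doubling_months


# Time implied by a 300,000x increase at a 3.4-month doubling time.
span = months_for_growth(300_000, 3.4)
print(f"{span:.0f} months (~{span / 12:.1f} years)")

# The same span under a Moore's-Law-style two-year (24-month) doubling.
print(f"{growth_factor(span, 24):.1f}x")
```

The implied span is about 62 months, a little over five years, and a two-year doubling over that span gives roughly a sixfold increase, close to the sevenfold OpenAI quotes (the exact figure depends on the span assumed).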

Advances in AI are mainly achieved through sheer scale: more data, larger models with more parameters, and/or more compute. In May 2020, OpenAI announced what was then the biggest AI model in history. Known as GPT-3, it has 175 billion parameters. By comparison, the largest version of GPT-2 had 1.5 billion parameters, and Microsoft’s Turing-NLG, introduced in February 2020 as the largest transformer-based language model in the world at the time, has 17 billion parameters.

The increase in compute

Relying on increasingly complex models to train ever more sophisticated AI tools can increase compute consumption, which in turn increases energy consumption and expands the carbon footprint. As the 2019 study by Emma Strubell and colleagues at the University of Massachusetts Amherst noted, training a single AI model can generate up to 626,155 pounds of CO2 emissions. Today, there are, of course, smaller AI models that generate less carbon output. However, at the time the study was conducted, GPT-2 was the largest model available, and it was treated as an upper boundary on model size. Just a year later, GPT-3 is more than 100 times larger than its predecessor.
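The size comparison is easy to check from the published parameter counts (GPT-2’s largest version at 1.5 billion, Microsoft’s Turing-NLG at 17 billion, GPT-3 at 175 billion). The small sketch below computes the ratios; the dictionary keys are just labels chosen for this example.

```python
# Parameter counts in billions, as reported when the models were announced.
PARAMS_BILLIONS = {
    "GPT-2 (largest version)": 1.5,
    "Turing-NLG (Microsoft)": 17.0,
    "GPT-3": 175.0,
}


def ratio(larger: str, smaller: str) -> float:
    """How many times more parameters `larger` has than `smaller`."""
    return PARAMS_BILLIONS[larger] / PARAMS_BILLIONS[smaller]


print(f"GPT-3 vs GPT-2: {ratio('GPT-3', 'GPT-2 (largest version)'):.0f}x")
print(f"GPT-3 vs Turing-NLG: {ratio('GPT-3', 'Turing-NLG (Microsoft)'):.1f}x")
```

GPT-3 comes out at roughly 117 times the size of GPT-2 and about 10 times the size of Turing-NLG. Parameter count is only a proxy for training compute, but it shows how quickly the upper boundary moved.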

It has long been understood that the relationship between model performance and model complexity (measured as number of parameters or inference time) is, at best, logarithmic: a linear gain in performance requires an exponentially larger model, which at some point yields diminishing returns at an ever higher computational and carbon cost. In other words, if we do not change the way we design, provision, and operate these networks, their carbon impact will keep increasing.
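A toy model makes the diminishing returns concrete. Assume, purely for illustration, that a benchmark score grows as score = A + B·log10(parameters); inverting that shows how many parameters each additional step of performance costs. The constants A and B are hypothetical and not fitted to any real model family.

```python
import math

# Hypothetical constants chosen only to illustrate the shape of the curve;
# they are not fitted to any real model family.
A, B = 50.0, 5.0  # score = A + B * log10(parameters)


def score(params: float) -> float:
    """Toy performance model that grows logarithmically with parameter count."""
    return A + B * math.log10(params)


def params_needed(target_score: float) -> float:
    """Invert the toy model: parameters required to reach a target score."""
    return 10 ** ((target_score - A) / B)


for target in (90, 95, 100, 105):
    print(f"score {target}: {params_needed(target):.0e} parameters")
```

Under this assumption, each constant five-point gain multiplies the required model size by ten, and compute and energy tend to scale with model size, which is the “linear gain, exponential cost” pattern described above.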

How to reduce the carbon footprint of AI

There are three main ways to reduce the carbon footprint of AI:

- By increasing the visibility of the potential CO2 footprint caused by training and operating an AI tool

- By changing the way we train neural networks

- By ensuring we minimize the carbon footprint of the compute power consumed.

Increasing the visibility of the CO2 footprint

The ability to measure and report the CO2 footprint would help increase public awareness of the severity of the problem. In a paper issued in July 2019, researchers at the Allen Institute for AI proposed floating point operations (FPOs) as the most universal and useful energy efficiency metric. Another group developed a Machine Learning Emissions Calculator, which aims to provide better visibility of the potential CO2 footprint. In addition, a team of researchers from Stanford, Facebook AI Research, and McGill University created a tool that measures both how much electricity a machine learning project uses and what that means in terms of carbon emissions. Their paper, published in early 2020, pointed out that relying on FPOs might not lead to accurate results.
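Measurement tools of this kind broadly turn power readings into energy and energy into emissions. The sketch below shows that arithmetic under stated assumptions: power is sampled at evenly spaced intervals, energy is estimated with the trapezoidal rule, and a single carbon-intensity factor converts kWh to CO2. It is not the code of any of the tools mentioned, and the sample values and the 0.4 kg CO2/kWh factor are hypothetical.

```python
from typing import Sequence


def energy_kwh(power_watts: Sequence[float], interval_s: float) -> float:
    """Trapezoidal integration of evenly spaced power samples into kWh."""
    if len(power_watts) < 2:
        return 0.0
    joules = sum((a + b) / 2 * interval_s
                 for a, b in zip(power_watts, power_watts[1:]))
    return joules / 3_600_000  # 1 kWh = 3.6 million joules


def co2_kg(kwh: float, kg_co2_per_kwh: float) -> float:
    """Convert energy to emissions with a grid carbon-intensity factor."""
    return kwh * kg_co2_per_kwh


# Hypothetical samples: one reading per minute for an hour at a steady 300 W.
samples = [300.0] * 61
kwh = energy_kwh(samples, interval_s=60)
print(f"{kwh:.2f} kWh, {co2_kg(kwh, 0.4):.2f} kg CO2")  # 0.4 is an assumed factor
```

The carbon-intensity factor is where location matters most: the same kWh can carry very different emissions depending on the grid mix, which is one reason FPO counts alone cannot capture the footprint.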

Changing the way we train neural networks

Earlier this year, researchers from MIT published a paper contending that their once-for-all (OFA) network could significantly reduce the carbon footprint of AI. According to ScienceBlog, “Using the system to train a computer-vision model, [the MIT researchers] estimated that the process required roughly 1/1,300 the carbon emissions compared to today’s state-of-the-art neural architecture search approaches.”

Building neural networks in a different way, as MIT’s OFA network does, so that better AI no longer depends on ever more compute power, shows the IT industry’s clear aspiration to reverse the current negative trend.
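The savings of the once-for-all idea come from amortization: as its authors describe it, one large network is trained once and contains many sub-networks, so each new target device gets a specialized model without repeating architecture search and training from scratch. The sketch below compares the two cost structures. All cost figures are hypothetical relative units (think GPU-hours), not numbers from the MIT paper.

```python
def conventional_cost(n_targets: int, search_and_train: float) -> float:
    """Repeat architecture search and training for every deployment target."""
    return n_targets * search_and_train


def once_for_all_cost(n_targets: int, supernet_training: float,
                      specialization: float) -> float:
    """Train one shared network once, then specialize cheaply per target."""
    return supernet_training + n_targets * specialization


# Hypothetical relative costs (e.g. GPU-hours); not figures from the MIT paper.
SEARCH_AND_TRAIN = 100.0
SUPERNET_TRAINING = 400.0
SPECIALIZATION = 1.0

for n in (1, 5, 40):
    print(n,
          conventional_cost(n, SEARCH_AND_TRAIN),
          once_for_all_cost(n, SUPERNET_TRAINING, SPECIALIZATION))
```

With a single target the shared network is more expensive, but the up-front cost is quickly repaid once models are needed for many devices, which is the scenario where the large reported savings arise.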

A much more radical approach was advocated by the AI guru Geoffrey Hinton in 2017: “The future depends on some graduate student who is deeply suspicious of everything I have said … My view is throw it all away and start again.” It seems that simply throwing more compute power at more complex AI scenarios is not the way forward. Perhaps we should use the human brain as a reference: it makes up only about 2% of a person’s weight and needs only about 20 W to function, barely enough to power a lightbulb.

Using environmentally friendly compute capabilities

In addition to changing the way we build and run neural networks, using more efficient and more environmentally friendly compute power could also reduce the carbon footprint. According to the United States Environmental Protection Agency, one kilowatt-hour of electricity consumption generates on average 0.954 pounds of CO2 emissions (US-based numbers). This average reflects the varying carbon footprints and relative proportions of different electricity sources across the US (e.g., nuclear, renewables, natural gas, coal). The 2019 paper by E. Strubell et al. applied this US-wide average to calculate the carbon emissions of various AI models based on their energy needs.
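As we understand the method in the Strubell et al. paper, total training energy is estimated as p_t = 1.58 · t · (p_c + p_r + g · p_g) / 1000 kWh, where t is training time in hours, p_c and p_r are the average CPU and DRAM power draw in watts, g is the number of GPUs, p_g is the average power per GPU, and 1.58 is an industry-average data-center PUE; emissions are then 0.954 · p_t pounds of CO2e. The sketch below implements that estimate. The example run (8 GPUs at 250 W for 72 hours, with 100 W CPU and 30 W DRAM) is hypothetical.

```python
PUE_2018_AVERAGE = 1.58     # industry-average PUE used in the paper
US_LBS_CO2_PER_KWH = 0.954  # EPA US-average figure quoted above


def total_power_kwh(hours: float, cpu_watts: float, dram_watts: float,
                    n_gpus: int, gpu_watts: float,
                    pue: float = PUE_2018_AVERAGE) -> float:
    """Energy for a training run: average component draw x time x PUE, in kWh."""
    return pue * hours * (cpu_watts + dram_watts + n_gpus * gpu_watts) / 1000


def co2_lbs(kwh: float, lbs_per_kwh: float = US_LBS_CO2_PER_KWH) -> float:
    """Convert energy to pounds of CO2-equivalent."""
    return lbs_per_kwh * kwh


# Hypothetical run: 8 GPUs at 250 W each, plus CPU and DRAM, for 72 hours.
kwh = total_power_kwh(hours=72, cpu_watts=100, dram_watts=30, n_gpus=8, gpu_watts=250)
print(f"{kwh:,.0f} kWh -> {co2_lbs(kwh):,.0f} lbs CO2e")
```

Both the PUE and the emissions factor are parameters rather than constants, which is exactly where a more efficient, lower-carbon platform changes the result.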

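If, however, an AI model were provisioned on a compute platform that was both extremely efficient (a power usage effectiveness, or PUE, rating of 1.1 or lower) and used only electricity produced by renewables (for instance, wind or solar), it would have a far lower CO2 footprint. PUE is the ratio of a data center’s total energy use to the energy used by its IT equipment, so 1.0 is the theoretical ideal.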

Using a compute platform that is highly efficient (with a PUE close to 1.0), runs only on renewable electricity, and accounts for environmental impact across procurement, provisioning, and disposal will reduce the overall carbon footprint. Using a public cloud provider that operates a highly efficient and sustainable data center network can therefore reduce the CO2 emissions of existing AI training environments.
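Because PUE is total facility energy divided by IT equipment energy, the energy a data center draws is the servers’ energy multiplied by its PUE, and emissions then scale with the carbon intensity of the supply. The sketch below compares three scenarios for the same hypothetical workload: an average data center (PUE 1.58) on the US grid mix, an efficient one (PUE 1.1) on the same grid, and an efficient one on a low-carbon supply. The 0.05 lbs/kWh figure for renewables is an assumed placeholder, not a published value.

```python
def facility_kwh(it_kwh: float, pue: float) -> float:
    """PUE = total facility energy / IT equipment energy, so total = IT x PUE."""
    return it_kwh * pue


def emissions_lbs(it_kwh: float, pue: float, lbs_per_kwh: float) -> float:
    """Emissions for a workload, including the data center's overhead."""
    return facility_kwh(it_kwh, pue) * lbs_per_kwh


IT_KWH = 1_000.0  # hypothetical energy drawn by the servers themselves

baseline = emissions_lbs(IT_KWH, pue=1.58, lbs_per_kwh=0.954)  # average DC, US grid mix
efficient = emissions_lbs(IT_KWH, pue=1.1, lbs_per_kwh=0.954)  # efficient DC, same grid
green = emissions_lbs(IT_KWH, pue=1.1, lbs_per_kwh=0.05)       # assumed low-carbon supply

for label, lbs in [("baseline", baseline),
                   ("PUE 1.1", efficient),
                   ("PUE 1.1 + renewables", green)]:
    print(f"{label:<22}{lbs:>8.0f} lbs CO2")
```

Improving PUE trims the overhead, but in this sketch the switch to low-carbon electricity has by far the larger effect, which is why both levers appear together in the text.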

Because sustainability is becoming a central subject across the entire IT sector, many cloud providers publish both their efficiency ratings and their CO2 footprint per data center online, and a substantial number have either already achieved, or have clear ambitions to reach, 100% renewable energy for their global infrastructure.
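Published per-region figures make the choice of where to train a small optimization problem: emissions per kWh of server energy are the region’s PUE multiplied by the carbon intensity of its electricity. A minimal sketch, assuming you have both numbers for each candidate region; the region names and values below are hypothetical, and real providers publish this data in their own formats.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    pue: float
    kg_co2_per_kwh: float  # carbon intensity of the region's electricity supply

    def kg_per_it_kwh(self) -> float:
        """Emissions per kWh used by the servers, including facility overhead."""
        return self.pue * self.kg_co2_per_kwh


# Hypothetical regions and figures; substitute the values your provider publishes.
REGIONS = [
    Region("region-a", pue=1.20, kg_co2_per_kwh=0.45),
    Region("region-b", pue=1.10, kg_co2_per_kwh=0.30),
    Region("region-c", pue=1.15, kg_co2_per_kwh=0.05),
]


def greenest(regions):
    """Pick the region with the lowest emissions per unit of compute energy."""
    return min(regions, key=Region.kg_per_it_kwh)


best = greenest(REGIONS)
print(best.name, round(best.kg_per_it_kwh(), 4))
```

Note that the region with the best PUE (region-b) is not the greenest here: a slightly less efficient data center on a much cleaner supply comes out ahead, so both published figures are worth checking.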

Summary

AI has the potential to deliver significant value to us all. Based on our research, more than 53% of organizations have now moved beyond AI pilots and are already reaping real and tangible benefits. But AI also holds the promise of far wider, global benefits: it has the capacity to help reduce worldwide greenhouse gas (GHG) emissions by 4% by 2030. However, that value comes, at least for now, at the expense of CO2 emissions and the ensuing environmental impact. We can mitigate this impact by increasing our overall awareness, changing the way we train AI models, and using more efficient and more environmentally sustainable compute capabilities. Or, as Geoffrey Hinton put it, by going back to the drawing board.


For more in-depth and detailed research related to AI, please see the Capgemini Research Institute’s publications on the subject.

[1] For instance, repetitive or non-value-adding tasks.

[2] These are theoretical figures; in reality, training an AI tool today will probably consume less.
