The Believability Index

Blog: Tyner Blain

I learned a lesson a long time ago – your level of confidence is irrelevant when estimating outcomes. What matters is the justification for your confidence. The Believability Index is the tool to use to better predict, and thereby improve, your outcomes.

Decisions

We make investment decisions to support our strategy, seeking to create customer value and competitive advantage. The nature of those decisions blends investment with imagining. We imagine approaches to exploit an opportunity or solve a problem. Then we evaluate those ideas as investments. At its core, we decide whether an idea is a good one or a bad one for us to pursue at this time.

To evaluate an investment, you must estimate what you anticipate. How much do you expect it to cost? How well do you expect it to work? How much do you estimate it will be worth?

I’ve built and seen a lot of estimates informing those decisions. Estimates inside problem statements, business cases, impact maps. Estimates in every unit imaginable. Currencies, team-weeks, t-shirt sizes, click-thru rates, lifetime value. Estimates built through many processes, from Monte Carlo and Markov models to planning poker and value-effort matrices.

Here’s the thing. Not all estimates are equally believable.

Expectations

As product managers, we have the responsibility of making good investment decisions. We take our insights about customers, our anticipations of competitors, and our appreciation of our constraints and use them to generate ideas. Those ideas are the opportunities we choose to pursue and the problems we choose to solve. We collaborate with our teams to discover what we want to build. Through creating differentiated value for customers, we expect to cause changes in behavior. When our product becomes the better choice for more people solving more problems, we expect more sales, leading to more profits.

The best practice in product management is to develop disconfirmable hypotheses describing these expectations. Most of the time, people are not documenting their hypotheses, and instead state their expectations based on their assumptions. A lot of the epics I see have these implicit assumptions of value. It’s the product operations version of a leap of faith: the “build it and they will come” idea from the movie Field of Dreams, embedded in an operating model. A business has to decide if building something is a good idea. What do you expect it will cost to build it? How many of “them” do you expect to “come” after you build it? How much do you expect profits to increase?

You decide based on your expectation of roughly how many people will come, roughly how much it will cost to build it, and roughly how much you expect to benefit after they arrive. Running a business is not a leap of faith. Running a business is a series of intentional decisions based on your expectations. Your expectations are built on what you believe.

Trust and Implicit Risk

There’s a pattern of collaboration I call “T-shaped” where commercial leaders with a wide breadth of perspective collaborate with product managers who have a depth of insight into the domain: the product, the customers, the competitors, and the company’s capabilities. An important aspect of this collaboration is trust.

The commercial leader has to trust the estimates which come from the product manager. Estimates of expected benefit. Estimates of effectiveness. Estimates of cost. Once the product manager wraps up their analysis, assumptions, and hypotheses into an estimate, that detailed work takes a back seat to the estimate.

The estimate becomes the focus of this trust. This is the ideal collaboration pattern. Much better than the order-giver / order-taker pattern of product managers just shepherding the delivery of whatever the leader demands.

There is, however, a challenge. Annie Duke, in Thinking in Bets, quotes Jeff Yass, Founder, Susquehanna International Group: “The biggest risk is that you have a losing strategy when you think you have a winning one.” There is an implicit risk that the estimate upon which leaders form their beliefs is wrong.

Decision-making in uncertainty is based upon what we believe at the time of making the decision.

The risk Yass and Duke highlight is that the foundations for our beliefs may not be sound.

Believability

As product managers, we need to form and share an appreciation for the believability of our estimates. Not our confidence in our estimates, but the justification for that confidence. I built a tool for this in November 2017, working through this challenge while supporting product transformation at Ford Motor Company. I still have the photo of the whiteboard sketch I did on November 12th. The challenge I was addressing was how to express the believability of estimates, to avoid introducing hidden risks into the collaboration.

In the collaboration, there is a focus and hand-off around the estimate. A question is asked to determine how much the estimate should be trusted.

Where most people get it wrong is by asking the wrong question. “How confident are you in your estimate?” is the wrong question.

The research from Douglas Hubbard, author of How to Measure Anything, is clear – people are consistently overconfident about estimates. We operate in an environment of uncertainty, with ill-structured problems, undefined variables, and unexpected scenarios. What Kahneman, Klein, and Gigerenzer all point out to us is that we need to leverage heuristics in our approaches to decision-making in environments of uncertainty. Your confidence about your estimate is irrelevant. Instead, people should ask “What is the justification for your confidence?” Or more aggressively, “Why is this believable?”

What matters is the believability of your estimate, not your confidence in your estimate.

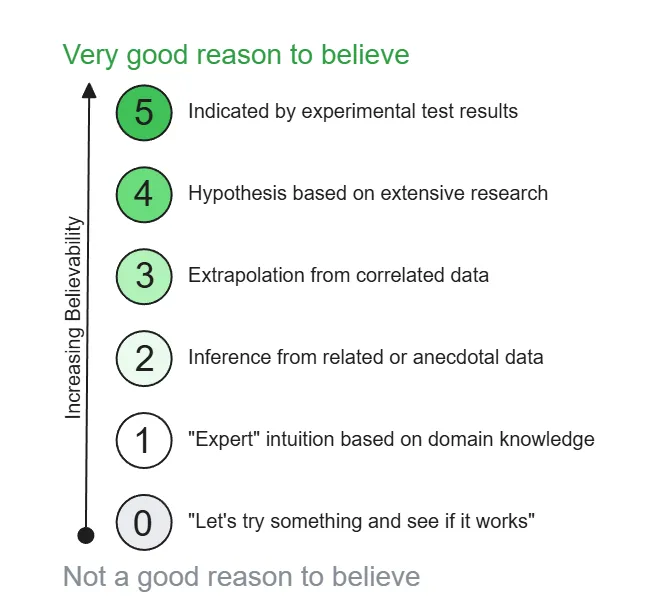

The Believability Index

The Believability Index is the tool I developed, and I have been using it with dozens of teams for almost a decade since. What the index does is apply a heuristic to score the believability of any estimate, based upon what underpins the estimate.

The believability score helps people understand estimates. I view believability in this context as the opposite of uncertainty, and built this index to reflect progressive reductions in uncertainty which inform any estimate or belief. Let’s walk through the scores from least believable to most believable.

0. “Let’s try something and see if it works”

A score of zero reflects complete uncertainty – this is just an idea. The CEO came back from a conference, and saw all the cool kids were doing it. “We need to add AI to our ice scrapers.” Trying things is not bad. A tradeoff in portfolio management is balancing the risk of being wrong with the risk of being late. Sometimes the better decision is to just try something.

However, there is no good reason to believe an estimate built on a “just try something” idea. The idea can be great. The estimate about the outcome of the idea is nonetheless not believable.

1. “Expert” intuition based on domain knowledge

“Trust me, I’m an expert” is, unfortunately, an effective persuasion technique. It can be the embodiment of bravado. Julia Galef warns against it in The Scout Mindset. Daniel Kahneman explored what he called the illusion of validity which stems from the overconfidence of domain experts. Experts can operate on stale knowledge, and suffer from cognitive biases, particularly in estimation. They also tend to be particularly confident, and Kahneman found this confidence to not be a reliable indicator of accuracy.

Having a domain expert tell you how and why something will work is absolutely more believable than a random idea. But it falls short of data in terms of believability.

2. Inference from related or anecdotal data

Many people, for many years, have been telling all of us the importance of being data-driven. Yup. Completely agree.

I think I first heard Rich Mironov say it: when you are building a product or a feature, you’re building it for the first time. In addition to breaking the assembly-line metaphor and associated misconceptions about product development, the lack of repeatability also means there is uncertainty about the effectiveness of whatever you plan to build. The data supporting your beliefs is not perfect. Whatever data you have is, by definition, data about something else. You have to infer the relevance of that data. Knowing that between 1% and 2% of Evernote freemium users converted to paid subscribers 20 years ago does not assure that launching your AI headshot generation service with a freemium tier will drive similar SaaS economics.

Having some data, which you can imagine being relevant, is better than having no data at all. Your estimate is informed – however weakly – by anecdotal data.

3. Extrapolation from correlated data

Correlated data is data much more crisply “about the thing you are measuring.” If you have data about how many people at a train station coffee shop add cream to their coffee, you can build a correlated estimate about how many people at an airport coffee shop will add cream to their coffee.

This is correlated data. Understanding context of usage is important to understanding customers and their behavior. The fundamental question is “Does this data apply?” and is a somewhat subjective interpretation. I think most people have heard the quote “In theory, theory matches practice. In practice, it does not.” I recently heard as an addition, “The difference between theory and practice is what you don’t understand about the theory.” A reasonable example of correlated data would be to look at growth rates for a product in one market, and start with the assumption that growth rates in a new market will match. Unless your markets are very different. Or very strongly coupled.

Another way to think about extrapolation is to look at the past and expect it for the future. If you’ve been growing share by 5% for the last few years, you could extrapolate the curve to expect another 5% in growth for the upcoming year. This is still tricky – conditions may be changing and a continuation of 5% growth is not assured.

Having data of greater relevance is better than less-relevant data. Data is a good foundation for believability.

4. Hypothesis based on extensive research

One of the fascinating things about developing hypotheses is that the act of considering how you would disconfirm the hypothesis triggers an improvement to the hypothesis. We talk about the benefits of testing hypotheses to enable rapid iteration and course correction. This is true, and it is a key element of becoming outcome-oriented, shifting your orientation from “Did we finish?” to “Did it work?” Even more powerful is the experience of anticipating the future evaluation, acting to apply if not force critical thinking to avoid “being wrong.” Don’t underestimate the power of wanting to avoid being wrong.

You develop data – related or correlated – through research. Increasing the level of rigor of research, formalizing into a hypothesis, increases believability further.

5. Indicated by experimental test results

“We ran an experiment and got the following results.” This is the best reduction of uncertainty I know. Therefore, it is the most believable rationale underpinning an estimate.

You cannot completely eliminate uncertainty. You have to – at a minimum – assume the people you experimented with are representative of the people you are trying to understand. Your experimental design will introduce opportunities for what you observe to misrepresent what you should expect. I had a client who used ring-releases, launching features to 1% of the user population, to minimize the risk of doing harm and to gather data about potential benefits. The team expected the results of releasing to 100% of customers to be nearly perfectly predicted by the results of the 1%.

At a minimum, you have to ask if the results from the past experiment will still apply in the future. The conditions will change. Your new proposition may be a differentiator at the time of launch, but a competitor’s rapid move to neutralize your advantage could still undermine your business case.

This is why there is no certainty, only believability.

Using the Believability Index

The Believability Index helps us address the hidden flaw in a trust-based collaboration. Without the index, the person making a decision based upon an estimate has no information about the believability of the estimate. Do they consider it to be a sure thing, or a long shot? They may sandbag or inflate expectations, biasing their decision. As a product manager, when building an estimate, don’t just share a clinical set of numbers.

Apply the Believability Index to characterize those numbers. You will make better decisions when you share both an expectation (your estimate) and a characterization (believability score).
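
For teams that track estimates in a script or spreadsheet export, here is a minimal sketch of what sharing both an expectation and a characterization could look like in Python. The class names, field names, and the sample numbers are all made up for illustration; only the six levels and their wording come from the index described above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Believability(IntEnum):
    """The six levels of the index, from least to most believable."""
    JUST_TRY_IT = 0            # "Let's try something and see if it works"
    EXPERT_INTUITION = 1       # "Expert" intuition based on domain knowledge
    ANECDOTAL_DATA = 2         # Inference from related or anecdotal data
    CORRELATED_DATA = 3        # Extrapolation from correlated data
    RESEARCHED_HYPOTHESIS = 4  # Hypothesis based on extensive research
    EXPERIMENT_RESULTS = 5     # Indicated by experimental test results


@dataclass(frozen=True)
class Estimate:
    """An expectation plus a characterization of what underpins it."""
    description: str
    value: float
    unit: str
    believability: Believability
    basis: str  # the answer to "Why is this believable?"

    def summary(self) -> str:
        # Report the number and its score together, never the number alone.
        return (f"{self.description}: {self.value:g} {self.unit} "
                f"(believability {self.believability.value}/5, {self.basis})")


# Hypothetical estimates; the values are invented for this example.
estimates = [
    Estimate("Freemium conversion", 1.5, "%",
             Believability.ANECDOTAL_DATA,
             "rate published for an unrelated product"),
    Estimate("Checkout completion lift", 4.0, "%",
             Believability.EXPERIMENT_RESULTS,
             "result of a 1% ring release"),
    Estimate("Build cost", 6.0, "team-weeks",
             Believability.EXPERT_INTUITION,
             "tech lead's gut feel"),
]

# List the least believable estimates first, so the hidden risks surface.
for estimate in sorted(estimates, key=lambda e: e.believability):
    print(estimate.summary())
```

Using an ordered enum is one possible design choice: it lets a decision-maker sort or filter estimates by score while still keeping the written justification next to each number.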