From Knowledge Sources to Executable Decision Models (DC2023 Notes Part 1)

Blog: Decision Management Community

Given the current explosive growth of Generative AI, it was only natural to expect that terms such as LLMs, ChatGPT, and OpenAI would dominate DecisionCAMP-2023 as well. In the world of Decision Modeling, this means that the new GenAI tools can be used to automate a step that has so far been much less automated: the generation of business rules and test cases from various knowledge sources. In this post I will briefly describe the related presentations and discussions.

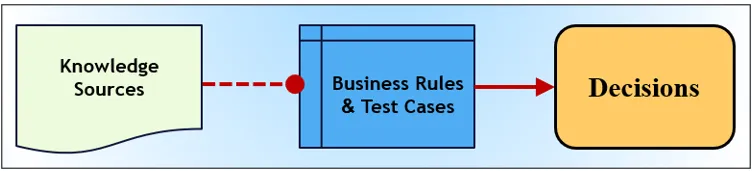

If we follow an intentionally simplified definition of DMN as “3 shapes and two lines” offered in James Taylor’s presentation, we may present the decision modeling and execution process as in the following picture:

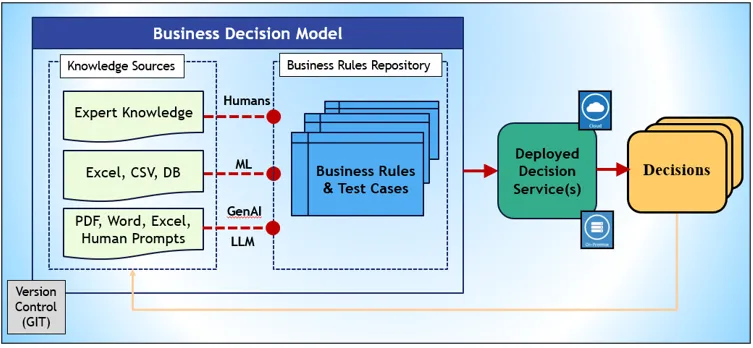

While the second line from “Business Rules” to “Decisions” is well-automated by different BR&DM tools, many presentations with GenAI in mind concentrated on the first line from “Knowledge Sources” to “Business Rules and Test Cases”. To talk about these presentations and the related discussions, let’s expand the above simplified schema with more detail:

We split Knowledge Sources into 3 types:

- Expert Knowledge in the minds of subject matter experts (SMEs)

- Historical Data available from Excel or CSV files, and from databases

- PDF/Word/Excel Documents and Human Prompts.

Expert Knowledge. Until recently, the creation of Rules Repositories remained mainly a prerogative of humans (usually subject matter experts). Different DMN-like tools offer great graphical interfaces that assist SMEs in creating rules and tests and in testing and debugging decision models, with their subsequent deployment in the cloud or on premises.

Historical Data. Different Machine Learning (ML) methods and tools are being used to discover business rules from historical data and to automatically generate rules in executable formats. Guilhem Molines’s presentation was devoted to this topic, with a nice demo that used AIMEE (AI Model Explorer and Editor tool). During the discussions, people mentioned similar tools and approaches from FICO, OpenRules, Sparkling Logic, and others. James Taylor described how DMN helps teams move away from stand-alone Machine Learning projects, which frequently fail, by using the above schema and starting with Decisions.

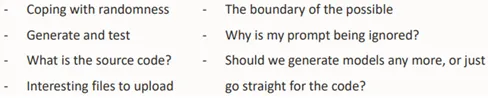
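To make the idea of discovering rules from historical data more concrete, here is a minimal sketch of the classic OneR technique in plain Python. It is not the method used by AIMEE or any of the tools mentioned above; the data set, the attribute names, and the function `learn_one_rule` are all hypothetical and serve only as an illustration.

```python
from collections import Counter, defaultdict

# Hypothetical historical loan decisions (illustrative data only).
HISTORY = [
    {"credit": "good", "employed": "yes", "decision": "approve"},
    {"credit": "good", "employed": "no", "decision": "approve"},
    {"credit": "fair", "employed": "yes", "decision": "approve"},
    {"credit": "fair", "employed": "yes", "decision": "approve"},
    {"credit": "fair", "employed": "no", "decision": "decline"},
    {"credit": "poor", "employed": "yes", "decision": "decline"},
    {"credit": "poor", "employed": "no", "decision": "decline"},
]


def learn_one_rule(rows, target):
    """OneR: choose the single attribute whose value-to-majority-outcome
    rules misclassify the fewest historical rows."""
    best = None
    attributes = [name for name in rows[0] if name != target]
    for attr in attributes:
        # Count outcomes for every value of this attribute.
        outcomes = defaultdict(Counter)
        for row in rows:
            outcomes[row[attr]][row[target]] += 1
        # One rule per value: predict the most frequent outcome.
        rules = {value: counts.most_common(1)[0][0]
                 for value, counts in outcomes.items()}
        errors = sum(1 for row in rows if rules[row[attr]] != row[target])
        if best is None or errors < best[2]:
            best = (attr, rules, errors)
    return best


attribute, rules, errors = learn_one_rule(HISTORY, "decision")
for value, outcome in rules.items():
    print(f"IF {attribute} = {value} THEN decision = {outcome}")
print(f"Misclassified historical rows: {errors}")
```

The output is a small set of human-readable IF-THEN rules that an SME could review and correct before they enter a rules repository, which is the main attraction of rule discovery over an opaque model.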

PDF, Word, Excel Documents and Human Prompts. After GenAI tools such as ChatGPT were introduced in Nov-2022, it became possible to use natural language documents to generate business rules in DMN or JSON formats, or even the corresponding executable code in Python or Java. First, Gary Hallmark gave a demo that generated an executable decision model for the well-known Loan Approval problem described in Chapter 11 of the DMN specification. To work around the limitations of the current versions of GenAI tools such as ChatGPT-4, Gary actively used human prompts. He managed to generate a working decision model and pointed out the problems he faced along the way. Gary raised important questions for discussion:

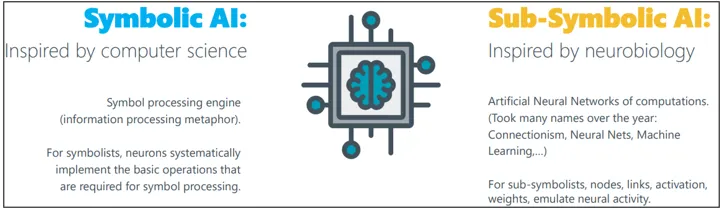
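As a rough illustration of what “business rules in JSON format” plus test cases might look like, here is a minimal Python sketch. The rule structure, field names, and thresholds are hypothetical; they are not taken from Gary’s demo or from the DMN Loan Approval example. The point is only that generated rules can be executed and checked independently against expected outcomes.

```python
import json

# Hypothetical rules in JSON, of the kind a GenAI tool might be prompted to produce.
GENERATED_RULES = """
[
  {"if": {"credit_score_min": 700, "dti_max": 0.35}, "then": "Approve"},
  {"if": {"credit_score_min": 620, "dti_max": 0.30}, "then": "Refer"},
  {"if": {}, "then": "Decline"}
]
"""

# Hypothetical test cases written by a human, independent of the generated rules.
TEST_CASES = [
    ({"credit_score": 720, "dti": 0.30}, "Approve"),
    ({"credit_score": 650, "dti": 0.25}, "Refer"),
    ({"credit_score": 650, "dti": 0.40}, "Decline"),
    ({"credit_score": 720, "dti": 0.40}, "Decline"),
]


def decide(rules, applicant):
    """First-hit policy: return the outcome of the first rule whose
    conditions all hold; a rule with no conditions acts as the default."""
    for rule in rules:
        cond = rule["if"]
        score_ok = applicant["credit_score"] >= cond.get("credit_score_min", float("-inf"))
        dti_ok = applicant["dti"] <= cond.get("dti_max", float("inf"))
        if score_ok and dti_ok:
            return rule["then"]
    return None  # no rule matched (cannot happen while a default rule exists)


def run_tests(rules, cases):
    """Return the list of (applicant, expected, actual) for failed cases."""
    failures = []
    for applicant, expected in cases:
        actual = decide(rules, applicant)
        if actual != expected:
            failures.append((applicant, expected, actual))
    return failures


rules = json.loads(GENERATED_RULES)
failures = run_tests(rules, TEST_CASES)
print("All test cases passed" if not failures else f"Failed cases: {failures}")
```

Keeping the test cases separate from the generated rules matters here: if both come from the same prompt, a misunderstanding in the prompt can silently appear in both.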

Then Denis Gagne provided an interesting classification of Symbolic and Sub-Symbolic AI approaches:

He also demonstrated the integrated use of ChatGPT and Prompt Engineering within the Trisotech decision modeling framework, showing how to use PDF-based knowledge sources. Later, Tom DeBevoise and Denis gave a very interesting live demonstration of how they used GenAI to automatically analyze regulatory PDF documents, extract elements of the business glossary, and use them in business rules. They showed that such knowledge sources may become an essential part of decision models kept in the version control system, and explained how they plan to use upcoming GenAI capabilities.

Jan Vanthienen and Alexandre Goossens from KU Leuven concentrated on questions:

- Can GPT build Decision Models from text?

- Can GPT build Decision Requirements Diagrams (DRDs)?

- Can GPT build Decision Logic Tables (DTs)?

- Can GPT make decisions and provide explainability to decisions?

Their answers were only partially optimistic, and you may find more information in their RuleML paper.

During the Q&A Session on Sep 18 we discussed these questions plus

- What represents the source code now?

- Are PDF documents and human prompts included?

- Can a decision model learn from its own decisions? (see the yellow arrow from “Decisions” to “Knowledge Sources” on the above schema)

I also pointed out that our Decision Management Community is better positioned to produce practical results from GenAI technology because we concentrate on very specific problems in different vertical domains such as Health Care or Financial Services. We may use the natural language processing capabilities of Large Language Models (LLMs), but at the same time we may produce domain-specific Small Language Models (SLMs) based on trusted sets of documents and on predefined concepts and their relationships.

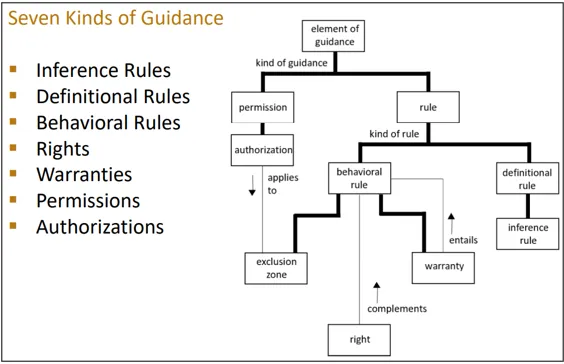

There were two presentations about foundational rule, decision, and process concepts in the modern AI-Sceptic and/or AI-Optimistic world. Alan Fish talked about Human Values and the business intervention model. Ron Ross presented his “anatomy” of the very concept of the business rule:

Naturally, the use of Generative AI in the Decision Management context was at the center of discussions during our Interactive Panel on Sep 19. There were many important statements from our experts, e.g. “You cannot put over there an automated decisioning system that cannot explain its decisions.” But you had better listen to their opinions and their dos and don’ts yourself.

Here I just want to stress several points about the “friendly enough” language for decision modeling. Gary Hallmark, the author of FEEL, recalled the spirited debates in the early days of DMN, when people argued about whether DMN even needed an expression language.

“Of course, no formal language is going to be friendly-enough”, said Gary. “I didn’t see a way out of that until the world flipped on its head almost a year ago and we got these LLMs… You describe your decision logic in natural language… and what could be friendlier than that? However, we need to deal with hallucinations, all sorts of gibberish, and the necessity of independent verification.”

Then Carlos Serrano-Morales said:

“Natural language is ambiguous, that’s why nobody does mathematics in English. There has to be formalism. I do think we will see a rise of LLM-based tools that are going to try to explain the things but they all will go back to how you explain the logic in the first place.”

Ron Ross tried to defend natural language and posed this question at the very end of the panel: “If formal models are required for the interpretation of laws, contracts, regulation, etc. then why aren’t these things written in formal models in the first place? (It will never happen. Natural language, for its shortcomings, is essential for groups and communities of people.)” Unfortunately, Ron’s question remained unanswered. Still, people resolve ambiguities in legal documents by issuing clarifications, and this process seems similar to refining ChatGPT prompts. I hope that, with Generative AI advancing so fast, we will see real progress in this direction sooner than we might expect today.

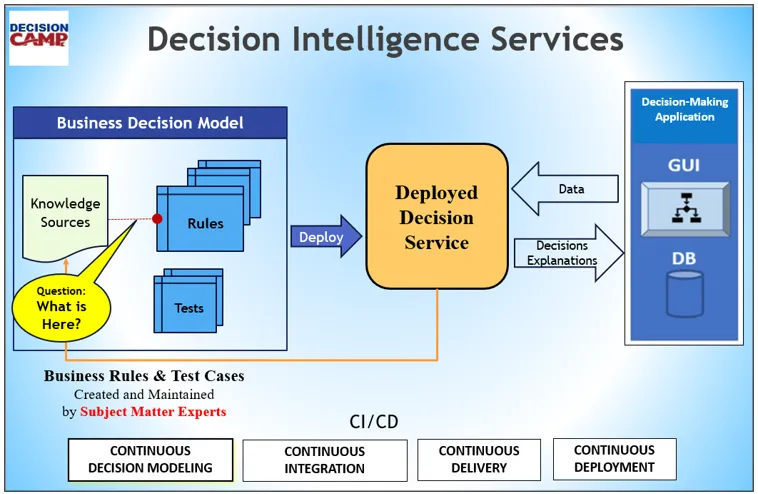

In my Closing Remarks on Sep 20, I presented several architectural schemas that summarize how the design of modern Decision Intelligence Services is influenced by Generative AI: