Entity Framework: A Short Introduction

Introduction

The Entity Framework is an object relational mapping tool that is well integrated into the .NET development environment. It lets you build a conceptual model of a physical data store and use that model in your applications. Data sources are represented as objects, so it’s much easier to incorporate data entities into your logic.

The problem with older data access technologies is that they did nothing to bridge the gap between the relational, set-based format of the data and the strongly typed, object-oriented logic that needs to access that data. Supporting large data reads, for example, required considerable code in the application logic to load the data and persist it back to the data store. This affected performance, caused issues with data type differences and generally contributed to poor design. The Entity Framework is a bridge between the data tier and the application logic.

Entities are represented as partial classes. This allows you to extend an entity by creating another partial class with the same class name.
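As a minimal sketch of that idea, the example below uses a hand-written stand-in for the generated half of an entity and extends it from a second partial class. The HasDocGenError property and the sample values are assumptions made up for illustration; the real generated class comes from your own model.

```csharp
using System;

// Stand-in for the designer-generated half of the entity.
// In a real project this half lives in the generated Designer.cs file.
public partial class Order
{
    public string EFOLDERID { get; set; }
    public string txtDocGenResult { get; set; }
}

// Hand-written half, kept in a separate file so that regenerating
// the model does not overwrite it. Both halves must share the same
// namespace and class name.
public partial class Order
{
    // Hypothetical convenience property built on a mapped column.
    public bool HasDocGenError
    {
        get
        {
            return txtDocGenResult != null
                && txtDocGenResult.StartsWith("E", StringComparison.Ordinal);
        }
    }
}

public static class PartialClassDemo
{
    public static void Main()
    {
        var order = new Order { EFOLDERID = "A1", txtDocGenResult = "Error: template missing" };
        Console.WriteLine(order.HasDocGenError);
    }
}
```

Keeping the extension in its own file means the custom members survive whenever the model is regenerated.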

Differences between object-oriented logic and relational data stores

Data Type Differences

– nvarchar(20) has a limited size, but a .NET string allows up to roughly 2 GB of data

– sql_variant only loosely maps to the .NET object type

– Binary data in the database doesn’t quite map to a byte array in .NET

– Date and time formats can become an issue when mapping between DB and .NET
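The type gaps listed above can be checked by hand before data is written back. The sketch below is only an illustration: the helper names are made up, and it assumes a SQL Server datetime column, whose range is exposed through System.Data.SqlTypes.SqlDateTime.

```csharp
using System;
using System.Data.SqlTypes;

public static class TypeGapDemo
{
    // A .NET string has no column-style limit, so the length has to be
    // checked against the nvarchar(n) size manually.
    public static bool FitsNvarchar(string value, int maxLength)
    {
        return value == null || value.Length <= maxLength;
    }

    // A SQL Server datetime column covers a narrower range than System.DateTime.
    public static bool FitsSqlDateTime(DateTime value)
    {
        return value >= SqlDateTime.MinValue.Value
            && value <= SqlDateTime.MaxValue.Value;
    }

    public static void Main()
    {
        Console.WriteLine(FitsNvarchar("A value that is longer than twenty characters", 20));
        Console.WriteLine(FitsSqlDateTime(DateTime.MinValue));
        Console.WriteLine(FitsSqlDateTime(new DateTime(2010, 1, 1)));
    }
}
```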

Relationship Differences

– The database uses primary / foreign key relationships. The relationship definitions are stored as metadata in the database’s system tables.

– .NET uses object references: a child object links to its parent object, and the parent holds a collection of its child objects
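A rough sketch of the object side of that relationship is shown below. Customer and Purchase are hypothetical classes invented for this illustration; the point is that the link is an object reference and a child collection rather than a foreign key column.

```csharp
using System;
using System.Collections.Generic;

public class Customer
{
    public int Id { get; set; }
    public string Name { get; set; }

    // The parent holds a collection of its child objects.
    public List<Purchase> Purchases { get; private set; }

    public Customer()
    {
        Purchases = new List<Purchase>();
    }

    public void AddPurchase(Purchase purchase)
    {
        purchase.Customer = this;   // child -> parent reference
        Purchases.Add(purchase);    // parent -> child collection
    }
}

public class Purchase
{
    public int Id { get; set; }

    // Object reference to the parent, in place of a CustomerId foreign key column.
    public Customer Customer { get; set; }
}

public static class RelationshipDemo
{
    public static void Main()
    {
        var customer = new Customer { Id = 1, Name = "Contoso" };
        customer.AddPurchase(new Purchase { Id = 100 });
        customer.AddPurchase(new Purchase { Id = 101 });

        // Navigate in both directions without writing a join.
        Console.WriteLine(customer.Purchases[0].Customer.Name);
        Console.WriteLine(customer.Purchases.Count);
    }
}
```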

Inheritance Differences

– .NET supports single inheritance (have a base class with shared features and then derive from that base class to create similar objects)

– Makes code simpler and easier to debug

– Relational databases do not support inheritance; tables cannot be inherited by other tables

– Modelling a database that attempts to emulate some kind of table hierarchy (inheritance) can cause issues and introduce very complex entity models in .NET

Identity / Equality Differences

– In the database, the primary key constraint (unique column) is the identifier of a row object

– In .NET, two objects loaded with the same data from the same table are NOT equal by default, even though they hold the same data. Equality is by reference, so two variables are equal only when they reference the same object. Loading the same row from the database twice will therefore produce objects that do not appear equal in the logic.
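The difference can be seen with a small sketch. OrderRow and SameRow are hypothetical names used only for this illustration; SameRow compares by key, which mirrors how the database identifies a row.

```csharp
using System;

public class OrderRow
{
    public int Id { get; set; }
    public string Status { get; set; }
}

public static class IdentityDemo
{
    // Key-based comparison: two objects represent the same row
    // when their primary key values match.
    public static bool SameRow(OrderRow a, OrderRow b)
    {
        return a != null && b != null && a.Id == b.Id;
    }

    public static void Main()
    {
        // Two separate objects holding the same data, as if the same row
        // had been loaded twice.
        var first = new OrderRow { Id = 42, Status = "Open" };
        var second = new OrderRow { Id = 42, Status = "Open" };

        Console.WriteLine(first.Equals(second));                 // False: different references
        Console.WriteLine(object.ReferenceEquals(first, first)); // True: same reference
        Console.WriteLine(SameRow(first, second));               // True: same key
    }
}
```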

Handling these differences : Object Relational Mapping

You can write a lot of complex code to try and iron out these object/relational differences when working with data from your logic, or you can use a framework such as the Entity Framework to act as an intermediate layer that focuses on resolving the issues highlighted. This intermediary is called Object Relational Mapping: the relational database object is MAPPED to an OOP object, and that mapping resolves the differences highlighted. The mapping works in both directions, so your object-based changes can be written back to the database.
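To give a feel for what such a mapping layer does, here is a hand-rolled sketch of a two-way mapper. It is not how the Entity Framework is implemented; the Invoice class, the column names and the dictionary standing in for a database row are all assumptions made for illustration.

```csharp
using System;
using System.Collections.Generic;

public class Invoice
{
    public int Id { get; set; }
    public string Reference { get; set; }
    public decimal Total { get; set; }
}

public static class InvoiceMapper
{
    // Relational -> object: convert loosely typed column values
    // into strongly typed properties.
    public static Invoice FromRow(IDictionary<string, object> row)
    {
        return new Invoice
        {
            Id = Convert.ToInt32(row["InvoiceId"]),
            Reference = row["Reference"] as string,
            Total = Convert.ToDecimal(row["Total"])
        };
    }

    // Object -> relational: turn the object back into column values
    // so the changes can be written to the data store.
    public static IDictionary<string, object> ToRow(Invoice invoice)
    {
        return new Dictionary<string, object>
        {
            { "InvoiceId", invoice.Id },
            { "Reference", invoice.Reference },
            { "Total", invoice.Total }
        };
    }

    public static void Main()
    {
        var row = new Dictionary<string, object>
        {
            { "InvoiceId", 7 }, { "Reference", "INV-7" }, { "Total", 120.50m }
        };

        Invoice invoice = FromRow(row);
        invoice.Total += 10m;                      // change made in the logic
        Console.WriteLine(ToRow(invoice)["Total"]); // value ready to persist
    }
}
```

An ORM generates and maintains this kind of code for every entity, which is where the productivity gain comes from.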

Microsoft’s answer to handle these differences

Microsoft’s primary ORM tool, now supported within the framework, is the Entity Framework. It is Microsoft’s primary data access strategy and was first released with .NET Framework 3.5 Service Pack 1. Microsoft is investing heavily in the development of Entity Framework, so it is the recommended data access method.

LINQ to SQL is a similar technology developed by Microsoft and also benefits from the LINQ syntax, but Microsoft decided to take Entity Framework forward and leave LINQ to SQL as is, with no further development planned.

Benefits of using ORM

– More productive. Less complex code writing required to manage the differences between objects and relational data

– Better application design. Adding complex code can complicate the design. Introducing a mapping layer maintains the n-tier application stack

– Code can be re-used (the model can be saved off as its own project that is used by many applications or parts of the same application).

– Much more maintainable than custom code.

– Lots of different ORM tools (NHibernate was an older ORM tool that could be used with .NET, based on a port of the Java Hibernate ORM)

Note that executing the mappings incurs a small performance hit, but the other benefits of using ORM far outweigh the cons.

Generic Data Objects vs. Entity Objects

ADO.NET has long used generic data objects such as SqlConnection, SqlCommand, SqlDataAdapter, SqlDataReader and DataSet. Together these objects provide a means of getting data from a data store and writing data changes back to it. The DataTable tracks changes to its rows, and the DataAdapter writes those changes back to the data store when its Update method is called. Whilst these objects do a great job, there are a few issues with using generic data objects like these:

Generic Data Objects

– Tight coupling between application and database because the app code needs to know the structure of the data in the database. So changes to the database can cause problems and code updates may be needed.

– Older DataTables contain columns with loose typing and you would have to convert the column value to a strong type using a cast or the Convert class (although typed data sets resolve this to an extent)

– Extracting related data (data tables in a data set), whilst possible, introduces some complexities.

– Unnecessary looping of in-memory data is needed to find values (unless LINQ to DataSet is used)

– Generally more code needs to be written to extract bits of data at different times from DataSets.
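The loose typing and the looping described above look roughly like this in practice. The table is built in memory so the sketch runs without a database; the column names and values are made up for illustration.

```csharp
using System;
using System.Data;

public static class DataTableDemo
{
    // Build a small in-memory table standing in for query results.
    public static DataTable BuildOrders()
    {
        var table = new DataTable("Orders");
        table.Columns.Add("Id", typeof(int));
        table.Columns.Add("Total", typeof(decimal));
        table.Rows.Add(1, 19.99m);
        table.Rows.Add(2, 5.00m);
        return table;
    }

    public static decimal SumTotals(DataTable table)
    {
        decimal sum = 0m;
        foreach (DataRow row in table.Rows)
        {
            // row["Total"] is typed as object, so a conversion is needed,
            // and a misspelled column name only fails at run time.
            sum += Convert.ToDecimal(row["Total"]);
        }
        return sum;
    }

    public static void Main()
    {
        Console.WriteLine(SumTotals(BuildOrders()));
    }
}
```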

Entity Objects

– The ORM takes care of the strong typing from relational DB type to object type. This means you don’t have to be concerned with running expensive conversions on the returned data.

– The compiler checks the types at compile time, so type errors surface before the application runs

– More productive to work with as you have to write less code in order to work with the objects. Intellisense provides a much quicker way of accessing the columns in your data objects when writing LINQ for example.

– You are programming against a model, rather than custom code specific to the application

– You’re no longer working directly with the data store in your application logic, just normal objects, therefore decoupling the two.

– It provides a layer of data abstraction.
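For comparison with generic data objects, the sketch below queries strongly typed objects with LINQ. It runs against an in-memory list rather than a real entity model, and OrderEntity with its property names is a hypothetical stand-in for a generated entity class.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-in for a generated entity class.
public class OrderEntity
{
    public string FolderId { get; set; }
    public string DocGenResult { get; set; }
}

public static class TypedQueryDemo
{
    // Property names are checked by the compiler and offered by IntelliSense;
    // no string column names or conversions are involved.
    public static List<string> FailedFolderIds(IEnumerable<OrderEntity> orders)
    {
        return orders
            .Where(o => o.DocGenResult != null
                     && o.DocGenResult.StartsWith("E", StringComparison.Ordinal))
            .Select(o => o.FolderId)
            .ToList();
    }

    public static void Main()
    {
        var orders = new List<OrderEntity>
        {
            new OrderEntity { FolderId = "F1", DocGenResult = "Error" },
            new OrderEntity { FolderId = "F2", DocGenResult = "Success" }
        };

        foreach (string id in FailedFolderIds(orders))
        {
            Console.WriteLine(id);
        }
    }
}
```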

Entity Framework Benefits

So what are the benefits of using the Entity Framework? There are plenty.

1) Its APIs are now fully integrated into Visual Studio, so they are available to add to your projects

2) Uses LINQ as its query language, which again is integrated into the language

3) It’s independent of the data store

4) It abstracts data layer from the logic layer

Note that you might not need the Entity Framework for more basic data requirements. Simple reads of data from a few simple tables will not require you to set up entity models. Entity Framework is useful when you are working with more than a few tables within an application.

Entity Data Model

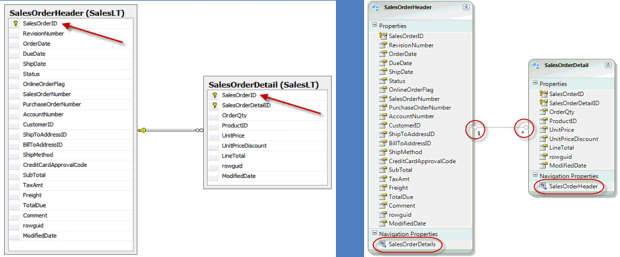

The entity data model is the structure of the business data, reshaped into objects usable by the programming language. It describes the structure of the entity data objects that make up the model and also the relationships between these entity objects.

It is made up of 3 main chunks of XML in a single data model file, with the extension .edmx:

– Conceptual model (the object-oriented structure)

– Storage model (the physical database storage structure)

– Mapping data (the mappings between the two models above)

Creating a Model

1) Create a VS project targeted at .NET 3.5

2) Add a new folder to that project called ‘DataModels’ (good to keep your models separate from your code)

3) Add a new item to that folder, specifically an ADO.NET Entity Data Model. This will start the model wizard.

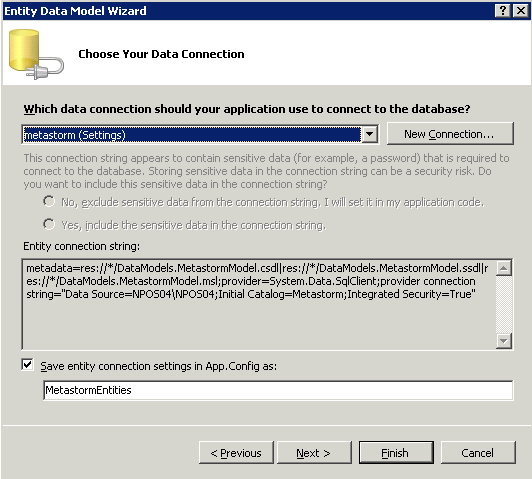

First you can select whether you want a blank model or to read from an existing database’s schema; that’s what we’ll do. Next, you specify the connection string to use to connect to the data store. The wizard then uses that connection string to build an Entity Framework connection string (which contains the normal ADO.NET connection string, but also some other metadata). The ‘metastorm’ connection string I’m using here is pulled from the App.Config file in my project.
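To show what that extra metadata looks like, the sketch below assembles an Entity Framework connection string by hand. The wizard normally writes this to App.Config for you; the model resource name, server and database used here are assumptions for illustration, not values taken from the project above.

```csharp
using System;

public static class EntityConnectionStringDemo
{
    // Wraps a normal ADO.NET connection string with the metadata
    // (conceptual, storage and mapping resources) and the provider name.
    public static string Build(string modelResource, string provider, string providerConnectionString)
    {
        string metadata = string.Format(
            "res://*/{0}.csdl|res://*/{0}.ssdl|res://*/{0}.msl", modelResource);

        return string.Format(
            "metadata={0};provider={1};provider connection string=\"{2}\"",
            metadata, provider, providerConnectionString);
    }

    public static void Main()
    {
        // Hypothetical values: adjust to your own model and server.
        Console.WriteLine(Build(
            "DataModels.MetastormModel",
            "System.Data.SqlClient",
            "Data Source=localhost;Initial Catalog=Metastorm;Integrated Security=True"));
    }
}
```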

At the bottom of this screen, the MetastormEntities is not just the name of the connection string that will be saved to App.Config, it’s the name of the entity model, so should reflect the name of the database you are pulling your entities from.

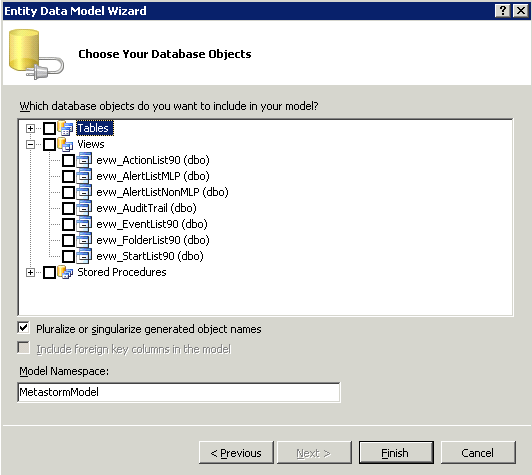

Next, the database specified by the connection string is read and its schema is presented to you so that you can select the database objects you wish to model: Tables, Views or Stored Procedures (incl. Functions):

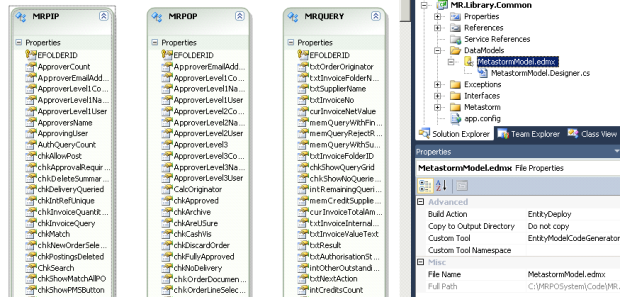

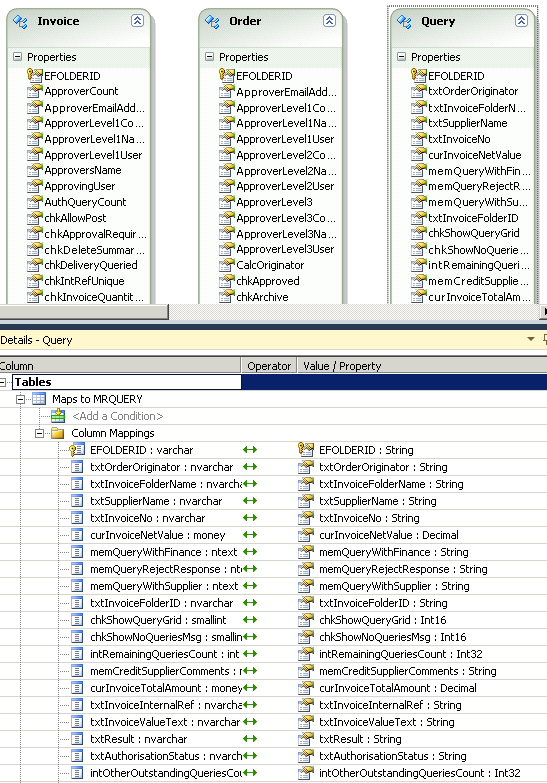

I select 3 of my local tables, MRPOPS, MRPIPS and MRQUERY, and click Finish. This creates my .edmx model called MetastormEntities (as specified earlier):

By right-clicking each entity you can view the mapping from the physical table to the conceptual entity, including the data type conversions. You can edit the names of the local properties that map to the database column names:

You may notice that I have renamed the entities as follows:

MRPOPS is now called Order (a singular entity) with an EntitySet name of Orders

MRPIPS is now called Invoice with an EntitySet name of Invoices

MRQUERY is now called Query with an EntitySet name of Queries

This will make working with the tables easier in code and leaves the actual table names intact.

Looking into the EDMX Model

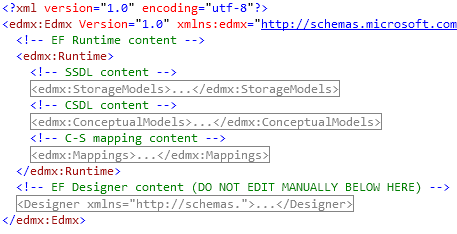

As previously mentioned, the .edmx model is actually an XML file that is rendered graphically by Visual Studio to show the conceptual model. To view the 3 parts of the .edmx file (physical storage, conceptual model and mappings), right-click the model, select ‘Open With…’ and choose the XML Editor. This is a collapsed view of my model file that shows the 3 parts (with the edmx namespace):

The runtime section here contains the metadata about the data, including the mappings.

The designer section is used by Visual Studio to display the entities graphically.

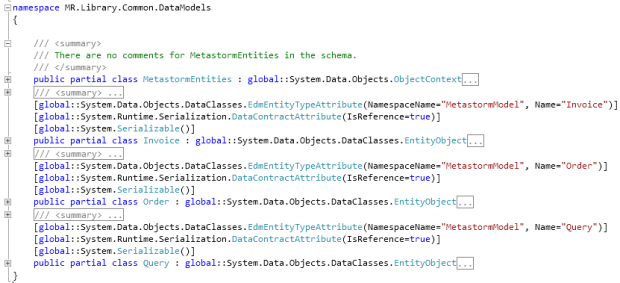

Looking into the Model Designer.cs

The designer.cs file contains the partial classes that represent each entity as well as the model itself:

Using Our MetastormModel.edmx

In our project, add a new class and make sure that System.Data.Entity.dll has been added to the project references. It should have been added automatically, but it is best to check. Because we have our class in the same project as our model, we do not need to copy our App.Config out into another project that might need our model.

We can now instantiate our model and start querying our tables (note that the looping in the example below is unnecessary, but it illustrates how to access each order in the query results):

//Namespaces needed at the top of the file

using System;

using System.Linq;

//Create an instance of the model

MetastormEntities metastorm = new MetastormEntities();

//Query the Orders entity set for doc generation errors

var selectedOrders = metastorm.Orders.Where(p => p.txtDocGenResult.StartsWith("E"));

//Write a message for every matching order

foreach (Order order in selectedOrders)

{

    Console.WriteLine("Order Folder with ID " + order.EFOLDERID + " has had a document failure!");

}

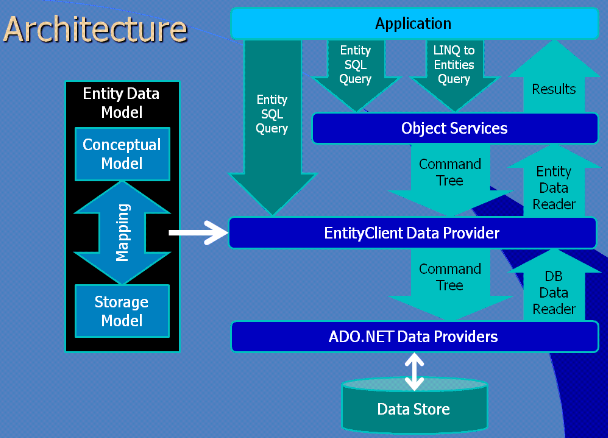

Entity Framework Architecture
