Data Visualization Testing

Blog: Indium Software - Big Data

Introduction

Data visualization plays a very important part in business intelligence and data analytics, since this is where customers view their required outputs and results. Data visualization is usually described as the pictorial or graphical representation of data. In Big Data, data visualization tools are used to explore and analyze massive volumes of data and present them pictorially, so that end users can access them for further research or reach their desired output through drill-downs.

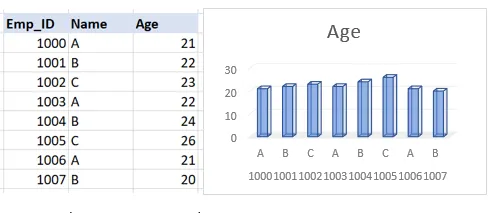

Here are a few of the main elements found in a data visualization:

- Graphs / Charts

- Tables

- Filters

- Navigation buttons

- Notes

Etc.

Here are some common data visualization techniques:

- Box plots

- Heat maps

- Histograms

- Charts

- Tree maps

- Network diagrams

Etc.

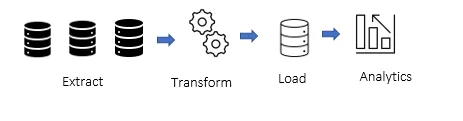

Before these elements can be built, a process called ETL produces the table that will be visualized. The ETL process has three stages:

- Extract – Extract the data from the source / raw database

- Transform – Improve the quality of the data and make it consistent

- Load – Load the data to the target database
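To make the three stages concrete, here is a minimal sketch of a toy ETL run using Python's built-in `sqlite3` module. The table names `raw_sales` and `sales_clean` and the cleaning rules (drop rows with missing values, normalise region spelling) are hypothetical assumptions for illustration; real ETL pipelines usually run in dedicated tools.

```python
import sqlite3


def run_etl(conn: sqlite3.Connection) -> None:
    """Toy ETL run: hypothetical raw_sales table -> hypothetical sales_clean table."""
    # Extract: read the raw rows from the source table.
    rows = conn.execute("SELECT region, amount FROM raw_sales").fetchall()

    # Transform: drop rows with missing values and normalise region names so
    # that " north", "North" and "NORTH" become the same value.
    cleaned = [
        (region.strip().title(), amount)
        for region, amount in rows
        if region is not None and amount is not None
    ]

    # Load: (re)create the target table and write the cleaned rows into it.
    conn.execute("DROP TABLE IF EXISTS sales_clean")
    conn.execute("CREATE TABLE sales_clean (region TEXT, amount REAL)")
    conn.executemany("INSERT INTO sales_clean VALUES (?, ?)", cleaned)
    conn.commit()


# Usage with made-up sample rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO raw_sales VALUES (?, ?)",
    [(" north", 100.0), ("NORTH", 50.0), ("South", None), ("south", 25.0)],
)
run_etl(conn)
print(conn.execute(
    "SELECT region, SUM(amount) FROM sales_clean GROUP BY region ORDER BY region"
).fetchall())  # [('North', 150.0), ('South', 25.0)]
```

Without the Transform step, " north" and "NORTH" would show up as two separate bars in a chart, which is exactly the kind of inconsistency this stage is meant to remove.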

The target database is then used to visualize the required outputs and results in BI tools such as Tableau or Power BI. In these tools, the output is called a “Dashboard” or “View”.


Data visualization testing

The main aim of any validation is to meet the requirements. We can follow the same testing process that we use for web testing, but the testing methodology differs here. Frankly speaking, it is very hard to validate data and analytics when the people doing the validation are not subject matter experts in how the dashboards, views, and data were developed, and very often the data itself is incorrect. This can usually be overcome by gathering proper requirements from the relevant experts, such as business analysts, business economists, and data scientists.

There are many approaches to validating dashboards and views. In general, dashboard validation has two parts: UI and Data.

UI:

Here are a few common scenarios to validate the UI part:

- Default elements (for example: title, subtitle, filters, tooltip info, etc.)

- Filter types (for example: multi-select or single-select drop-down)

- Filter behaviour (for example: context filters)

- Resolution / Font style & size / Colors

- Legend order

- Notes / valid error messages

Etc.

Data:

Here are a few common scenarios to validate the Data part:

- Compare values between the database and the dashboard

- Validate the calculations and logic used

- Check decimal places / rounding

Etc.
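For the decimal places / rounding check, one way is to compute the value we expect the dashboard to display from the database value and compare it with what is actually shown. The sketch below assumes (hypothetically) that the dashboard rounds half-up to 2 decimal places; BI tools and number formats can differ, so confirm the real rule first. It uses Python's `decimal` module with string inputs because binary floats can mislead here: Python's built-in `round(2.675, 2)` returns `2.67`, since 2.675 cannot be stored exactly as a float.

```python
from decimal import Decimal, ROUND_HALF_UP


def expected_display(db_value: str, places: int = 2) -> str:
    """Round a database value the way we *assume* the dashboard does (half-up)."""
    quantum = Decimal(1).scaleb(-places)  # Decimal('0.01') when places == 2
    return str(Decimal(db_value).quantize(quantum, rounding=ROUND_HALF_UP))


def rounding_mismatches(pairs, places: int = 2):
    """pairs: (database value as a string, value shown on the dashboard).

    Returns (db_value, shown, expected) for every value that does not match.
    """
    bad = []
    for db_value, shown in pairs:
        exp = expected_display(db_value, places)
        if shown != exp:
            bad.append((db_value, shown, exp))
    return bad


pairs = [("2.675", "2.68"), ("1.005", "1.00"), ("10", "10.00")]
print(rounding_mismatches(pairs))  # [('1.005', '1.00', '1.01')]
```

Comparing the displayed text rather than the numbers also catches a dashboard that shows "10" where "10.00" was required.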

For the Data part, there are multiple approaches to validating and comparing values between the source and the output, and we choose one based on the project's needs:

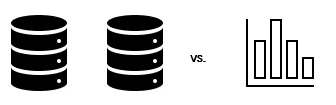

Raw database vs. Dashboard:

To validate the dashboard, we must build the output (source) table from the raw data using SQL, replicating whatever logic the ETL team used to produce the aggregated (output) table. Once we have the source table for the dashboard, we build further logic and calculations in Excel pivots or SQL to validate the specific charts and tables in the dashboard.
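A minimal sketch of this approach, again using Python's built-in `sqlite3`: the SQL query re-implements an ETL rule directly on the raw table, and the result is compared with the values exported from the dashboard. The table `raw_orders`, its columns, and the rule itself (ignore cancelled orders, total per region) are hypothetical; in a real project the query must mirror the ETL team's actual logic.

```python
import sqlite3

# Hypothetical ETL rule being replicated: ignore cancelled orders,
# then total the amount per region, rounded to 2 decimal places.
REPLICATED_QUERY = """
    SELECT region, ROUND(SUM(amount), 2) AS total
    FROM raw_orders
    WHERE status <> 'cancelled'
    GROUP BY region
"""


def compare_with_dashboard(conn, dashboard, tol=0.005):
    """Compare totals rebuilt from raw data with totals exported from a dashboard.

    `dashboard` maps region -> total as shown in the dashboard export.
    Returns {region: (expected, shown)} for every mismatch, including regions
    that appear on only one side.
    """
    expected = dict(conn.execute(REPLICATED_QUERY).fetchall())
    mismatches = {}
    for region in expected.keys() | dashboard.keys():
        exp, shown = expected.get(region), dashboard.get(region)
        if exp is None or shown is None or abs(exp - shown) > tol:
            mismatches[region] = (exp, shown)
    return mismatches


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_orders (order_id INTEGER, region TEXT, amount REAL, status TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?, ?)",
    [(1, "East", 100.0, "complete"), (2, "East", 20.0, "cancelled"), (3, "West", 55.5, "complete")],
)
# Here the dashboard wrongly includes the cancelled East order.
print(compare_with_dashboard(conn, {"East": 120.0, "West": 55.5}))  # {'East': (100.0, 120.0)}
```

The small tolerance allows for values that are rounded to 2 decimal places on the dashboard; set it to match the precision actually displayed.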

Aggregated / Results table vs. Dashboard:

Here we only need to build the output (source) table from the aggregated (results) table to validate the dashboard. There is no need to recreate the logic, since the ETL team has already applied it. Once we have the source table for the dashboard, we build further logic and calculations in Excel pivots or SQL to validate the specific charts and tables in the dashboard.
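The "further logic" step is often a pivot: apply the same filter selections as the dashboard, then sum a measure per value of the chart's dimension. The sketch below does this in plain Python as a stand-in for an Excel pivot; the column names `month`, `region` and `revenue` are hypothetical. Rebuilding the filter explicitly also helps catch the "backend filter is not applied" kind of bug.

```python
from collections import defaultdict


def chart_values(rows, filters, group_by, measure):
    """Rebuild the data behind one chart from an aggregated table.

    rows:     aggregated table rows as dicts (hypothetical column names)
    filters:  {column: set of allowed values}, mirroring the dashboard's filter selections
    group_by: the chart's dimension, e.g. "month"
    measure:  the numeric column the chart sums, e.g. "revenue"
    """
    totals = defaultdict(float)
    for row in rows:
        # Keep the row only if it passes every filter selection.
        if all(row[col] in allowed for col, allowed in filters.items()):
            totals[row[group_by]] += row[measure]
    return dict(totals)


aggregated = [
    {"month": "Jan", "region": "East", "revenue": 100.0},
    {"month": "Jan", "region": "West", "revenue": 40.0},
    {"month": "Feb", "region": "East", "revenue": 70.0},
]
# Expected bar values when the dashboard's Region filter is set to East.
print(chart_values(aggregated, {"region": {"East"}}, "month", "revenue"))
# {'Jan': 100.0, 'Feb': 70.0}
```

The resulting dictionary can then be compared value by value with the numbers shown in the chart or its tooltips.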

Previous version vs. latest version:

Some projects aim to improve performance or upgrade the BI tool version. In this case, we should validate the data between the two versions: download the values from both versions for the same filter combinations and compare them using Excel. This is usually called a Test Harness: the source table is placed on the left, the latest table on the right, and the formula (source - latest = 0) is used to check each value.
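The same Test Harness idea can be scripted when the exports get large. The sketch below assumes both versions are exported as CSV with identical columns (the column names `region`, `month` and `sales` are hypothetical), lines the rows up on the key columns, and reports every row where source - latest is not zero, plus any row that exists in only one export. A tiny tolerance is used instead of an exact zero because the values are parsed as floats.

```python
import csv
import io


def harness_diff(old_csv, new_csv, key_cols, value_col, tol=1e-9):
    """Apply (source - latest = 0) to two CSV exports lined up on key_cols.

    Returns (key, source, latest, difference) for each failing row; the
    difference is None when the key is missing from one of the exports.
    """
    def load(text):
        return {
            tuple(row[c] for c in key_cols): float(row[value_col])
            for row in csv.DictReader(io.StringIO(text))
        }

    old, new = load(old_csv), load(new_csv)
    failures = []
    for key in sorted(old.keys() | new.keys()):
        if key not in old or key not in new:
            failures.append((key, old.get(key), new.get(key), None))
        else:
            diff = old[key] - new[key]
            if abs(diff) > tol:
                failures.append((key, old[key], new[key], diff))
    return failures


old_export = "region,month,sales\nEast,Jan,100\nWest,Jan,40\n"
new_export = "region,month,sales\nEast,Jan,100\nWest,Jan,45\n"
print(harness_diff(old_export, new_export, ["region", "month"], "sales"))
# [(('West', 'Jan'), 40.0, 45.0, -5.0)]
```

In practice the two strings would be read from the files downloaded from each version of the dashboard.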

Note: The above methodologies are commonly used, but other methodologies exist as well.

Common bugs in data visualization testing

UI bugs

• Incorrect legend order

• Irrelevant colors

• Missing data in tooltip

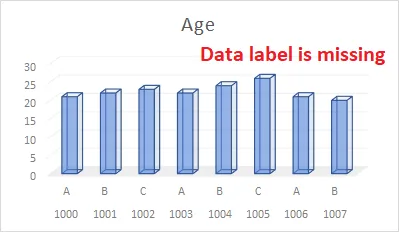

• Missing data labels

Etc.

Data bugs

• Mistakes in calculation / logic

• Decimal / rounding issues

• Backend filters not applied

Etc.

Conclusion

The world is already transforming through digitalization, and data plays a vital role in every business. Presenting data pictorially or graphically (i.e. data visualization) makes this digitization faster than before. If we want to create a quality product that builds the brand, there must be a quality check before the product is handed over to the client or customer. Welcome to our digitized world; we are eagerly waiting for the upcoming features in digitization and visualization.

The post Data Visualization Testing appeared first on Indium Software.