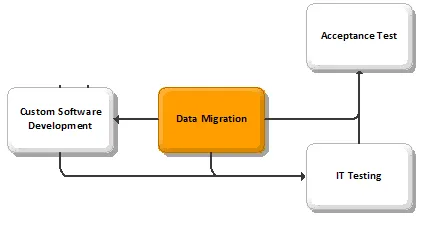

BPI Data Migration – Build Phase

Blog: Biz-Performance, David Brown

Description

- The transfer of all relevant past data from the 'legacy' (current) IT system to the new IT system. Other pertinent data that may previously have been stored on paper files are also entered into the system to enable it to become fully operational.

Client Value

- The new IT system will not be operational unless all required data has been entered into the system. If data migration is not done properly, system performance will be severely hampered because users will not have easy access to the information they require to do their jobs.

Approach

- Build a data model and/or a data dictionary in order to:

- Record the structure of the existing systems and data (as needed depending on level of detail already recorded in “As-Is” Technology Architecture).

- Document how data needs to be structured in the new system. (This should be restricted to 'logical' needs and not be used to specify 'physical' design approaches.)

- Compare the data structures of the proposed solutions against the client organization's needs

- A data model (at either a summary or a detailed level) shows the relationships between the various data entities involved in the business solution. Data models are commonly used when:

- An 'enterprise' data model exists to provide a high-level view of how company data is organized

- The overall solution is formed by several integrated components (only some of which are packages)

- The technical solution is part of a larger corporate database, and overall control must be maintained

- Internal client standards require use of data models.

- Some or all of the model may already exist as a result of:

- Work done during the requirements and selection segments.

- The client organization's pre-existing enterprise data models

- Standard models reflecting the structure of the chosen packages.

- A data dictionary defines precisely how data are accessed and used. It can be used both as an aid in designing documentation and as a software development support tool, saving coding time and ensuring consistent definition of the data structures.
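As an illustration of how a data dictionary can double as a development support tool, the sketch below holds a few dictionary entries in code and uses them to check a record. The `FieldDef` class, the `CUSTOMER_DICTIONARY` entries and the `check_record` helper are all hypothetical names invented for this example; a real project would take its definitions from the package or the client's own dictionary.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FieldDef:
    """One data dictionary entry: name, type and usage rules for a data item."""
    name: str
    dtype: type
    mandatory: bool
    description: str
    max_length: Optional[int] = None


# Hypothetical dictionary for a customer master record (illustration only).
CUSTOMER_DICTIONARY = [
    FieldDef("customer_id", str, True, "Unique customer key", max_length=10),
    FieldDef("name", str, True, "Registered customer name", max_length=60),
    FieldDef("credit_limit", float, False, "Agreed credit limit"),
]


def check_record(record: dict, dictionary: list) -> list:
    """Return a list of problems; an empty list means the record conforms."""
    problems = []
    for field_def in dictionary:
        value = record.get(field_def.name)
        if value is None or value == "":
            # Missing values only matter for mandatory items.
            if field_def.mandatory:
                problems.append(f"{field_def.name}: mandatory value missing")
            continue
        if not isinstance(value, field_def.dtype):
            problems.append(
                f"{field_def.name}: expected {field_def.dtype.__name__}"
            )
            continue
        if field_def.max_length is not None and len(value) > field_def.max_length:
            problems.append(
                f"{field_def.name}: longer than {field_def.max_length}"
            )
    return problems
```

Because every program reads the same definitions, a change to a field's length or mandatory status is made once and applies wherever the dictionary is used.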

- Determine the data to be converted

- Identify key data required to meet the prescribed 'functional' requirements and designs. Very often, this evaluation will be done in parallel with the detailed design of the functions. This exercise denotes the desired functional content of the information, requirements for detail versus summarization, timing, anticipated average/peak volumes, priorities, importance, etc.

- To minimize the data entry costs of historical paper documents, it is vital to determine how far back in time data conversion activities should reach.

- Undertake a study of the availability and 'integrity' of the data. Make recommendations for securing the data and conduct any appropriate clean-up activities.

- Data availability and integrity cannot be assumed. Existing data are rarely of good quality and often are insufficient for the needs of the new system. Consider the following options for clean-up activities:

- Manual validation and correction (by user departments or using outside clerical assistance)

- Automated validation / manual correction (by user departments or using outside clerical assistance)

- Automated correction (e.g. postal codes)

- Ignoring current data and collecting new data from scratch, e.g. a full inventory of assets (by user departments or using outside clerical assistance)

- Converting/supplementing data using tables etc.
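The clean-up options above can be combined in one pass: validate automatically, correct automatically where the fix is unambiguous, and route everything else to manual correction. The sketch below assumes, purely for illustration, a five-digit postal code format and a `postal_code` key on each record; `clean_postal_code` and `triage` are hypothetical helper names.

```python
import re

# Assumed format for illustration only: exactly five digits.
POSTAL_CODE = re.compile(r"\d{5}")


def clean_postal_code(raw: str):
    """Return (value, status) where status is 'ok', 'corrected' or 'manual'."""
    if POSTAL_CODE.fullmatch(raw):
        return raw, "ok"
    # Automated correction: drop spaces and hyphens, then re-validate.
    candidate = re.sub(r"[\s-]", "", raw)
    if POSTAL_CODE.fullmatch(candidate):
        return candidate, "corrected"
    # Anything else cannot be fixed safely by program.
    return raw, "manual"


def triage(records):
    """Split records into usable ones and a manual-correction queue."""
    clean, manual_queue = [], []
    for rec in records:
        value, status = clean_postal_code(rec["postal_code"])
        if status == "manual":
            manual_queue.append(rec)
        else:
            clean.append({**rec, "postal_code": value})
    return clean, manual_queue
```

The size of the manual queue after a trial run gives an early estimate of how much clerical effort the clean-up will need.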

- Define a data conversion strategy that outlines:

- Requirements for data: data items, quality/accuracy required, extent to which items are mandatory

- Options available e.g. various sources/ways for supplementing, validating, correcting, converting and/or loading the data

- Recommended approach

- Details of how to implement that approach.

- Identify desired approach to converting or loading the data. Options include:

- Automated conversion using custom programs written by the project team, the client's MIS department, the package vendor or a third-party contractor

- Automated conversion using package facilities

- Manual data entry using the package (performed by user departments or with external clerical assistance)

- Manual data entry in some other computer system followed by automated transfer (performed by user departments or with external clerical assistance).

- The overall data conversion strategy should be reviewed and signed off by the project sponsor and other key decision-makers.

- Undertake data conversion activities. Collect, prepare and load start-up data, including:

- Master file data (e.g. current account balances).

- Transactions in progress

- Historic information (if required).

- If required, begin manual data entry activities at this time. Depending on the size of the organization and resources dedicated, these activities can last weeks or even months.

- Conduct data conversion tests and obtain client acceptance for successful data conversion.

- Undertake conversion while the old system is 'frozen' and no 'live' business is being conducted. Accordingly, complete the process, verify the data and sign off the start-up data as rapidly as possible.
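A conversion test is easier to sign off when the program reports what it did. The following is a minimal sketch, not a definitive implementation: the legacy field names `ACCT_NO` and `BAL`, the target layout, and the names `ConversionReport`, `convert_record` and `run_conversion` are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class ConversionReport:
    """Counts and rejects collected during one conversion run."""
    read: int = 0
    loaded: int = 0
    rejected: list = field(default_factory=list)  # (record number, reason)


def convert_record(legacy: dict) -> dict:
    """Map one legacy record to the hypothetical new layout.

    Raises ValueError or KeyError if the record cannot be converted.
    """
    if not legacy.get("ACCT_NO"):
        raise ValueError("missing account number")
    return {
        "account_id": legacy["ACCT_NO"].strip(),
        "balance": round(float(legacy["BAL"]), 2),
    }


def run_conversion(legacy_records):
    """Convert every record, keeping rejects for review instead of stopping."""
    report = ConversionReport()
    converted = []
    for number, legacy in enumerate(legacy_records, start=1):
        report.read += 1
        try:
            converted.append(convert_record(legacy))
            report.loaded += 1
        except (ValueError, KeyError) as exc:
            report.rejected.append((number, str(exc)))
    return converted, report
```

After a run, `report.read` should equal `report.loaded` plus the number of rejects; any gap means records were lost somewhere in the process.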

Guidelines

Problems/Solutions

- It is often of limited benefit to produce data models or data dictionaries in a simple, package-driven implementation, since the data design of the application is often fixed at a technical level while the business-function level design work is straightforward. Do not allow the project team to waste time creating data models if no particular benefit is to be derived.

- Be aware that the exact format of a data model or data dictionary depends on its origin. Typically, however, data models are shown in the form of 'entities' and 'relationships' (e.g. an 'entity/relationship' model or a 'Bachmann diagram'). A data dictionary is frequently in tabular format, listing all recorded data types in alphanumeric sequence, plus their data attributes. It can normally be used to introduce data definitions that are incorporated automatically into programs.

Tactics/Helpful Hints

- Data requirements identified should include the extent to which accuracy and completeness of data are necessary. For example, historic management information may not need to be entirely accurate, but the records of employees' salaries must be absolutely correct.

- The two prime methods used to load data are manual data entry and the running of automated conversion programs:

- Automated Conversion Programs

- If appropriate, data conversion programs are defined, designed, developed, tested and ultimately accepted. They are often only used once for 'live' purposes (although test or 'dummy' conversions may be conducted beforehand). In some cases, these programs are developed to lower standards (e.g. less documentation and testing). Completing the conversion within time and resource constraints is a common concern; conversion programs therefore often include reporting functions to validate that the work has been correctly performed.

- Manual Data Load

- Since it is sometimes more efficient to use this off-the-shelf approach rather than to develop and test a major suite of programs, manual techniques may be used to enter 'live' start-up data. Normally, the package's standard data-entry facilities are used. This has the advantage of invoking the package's full validation routines.

- For very high volumes and high productivity, it may be possible to set up an alternative form of manual entry, for example, using a 'key-to-disk' system to set up batch records for processing through the system. Combined with specialist data-entry staff, this can provide a very rapid manual take-on of data.

- Manual techniques suffer from a high error rate. Appropriate measures should be taken to validate the data, to minimize the number of errors and to minimize the impact of those errors. It is, however, normal to assume that data is never 100 percent accurate and the business risks of having a small percentage of invalid data should be balanced against the costs of obtaining perfect data. It is important that the start up of the new system is not unreasonably delayed by errors that can be resolved after it is live. Take note of any non-critical errors that are detected so that they can be addressed as soon as possible after live start up of the system.

- When validating data conversion activities, pay attention to cross-checks, particularly where the data have been constructed without the normal processing/validation of the package. For example, verify that account balances are in balance, and that financial totals in other systems match control totals in the general ledger (e.g. total stock values, total creditors, total debtors, etc.).
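The control-total cross-check described above can be sketched as a simple reconciliation. This is an illustrative example only: the `reconcile` function and its inputs are hypothetical, and amounts are passed as strings so that `Decimal` arithmetic avoids binary floating-point rounding.

```python
from decimal import Decimal


def reconcile(subledger_balances, control_total, tolerance=Decimal("0.00")):
    """Compare the sum of converted balances with the ledger control total."""
    total = sum((Decimal(b) for b in subledger_balances), Decimal("0"))
    control = Decimal(control_total)
    difference = total - control
    return {
        "subledger_total": total,
        "control_total": control,
        "difference": difference,
        # In balance when the absolute difference is within the tolerance.
        "in_balance": abs(difference) <= tolerance,
    }
```

For example, `reconcile(["100.10", "200.20", "50.00"], "350.30")` reports the debtor balances as in balance, while dropping the last item would show a difference equal to the missing amount.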

Resources/Timing

- —