Abuse Cases – What Could Go Wrong?

Blog: Form Follows Function

Last week, in a post titled “The Flaw in All Things”, John Vincent discussed the problem of seeing “the flaw in all things”:

It’s overwhelming. It’s paralyzing.

I can’t finish a project because I keep finding things that could cause problems. I even mentioned this to our CTO and CEO at one point when we were trying to size some private deploys of our stack.

I couldn’t see anything but the largest configuration because all I could see was places where there was a risk. There were corners I wasn’t willing to cut (not bad corners like risking availability but more like “use a smaller instance here”) because I could see and feel and taste the pain that would come from having to grow the environment under duress.

I’m frustrated with putting everything in Docker containers because all I see is having to take down EVERYTHING running on one node because there may be a critical Docker upgrade. I see Elasticsearch rebalancing because of it. I see Kafka elections. Mind you the system is designed for this to happen but why add something that makes it a regular occurrence?

I can certainly sympathize. For what it’s worth, it sounds like those making the trade-offs he’s worried about could stand to be a bit more inclusive. That doesn’t necessarily mean the decisions would change, but at least being heard and knowing the answers to his questions might reduce some of the stress (not to mention perhaps helping out those responsible for the decisions).

When making design decisions, having (or, at least, having access to) this level of knowledge and experience has a great deal of value. As I noted in “NPM, Tay, and the Need for Design”, you have to consider both the use cases and the abuse cases for a given system (whether software or social).

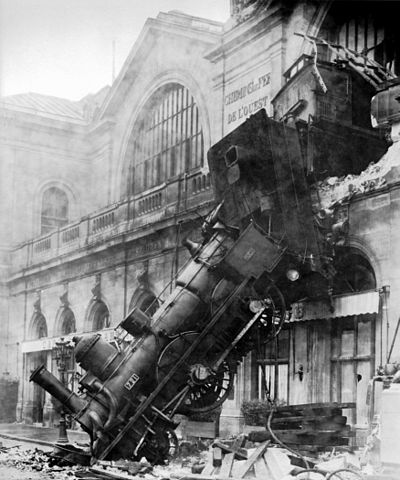

It’s not possible to foresee every potential flaw, and it probably won’t be feasible to eliminate every risk that’s discovered. That doesn’t mean time spent in risk evaluation is wasted. Dealing with foreseeable issues before they become problems (where “dealing” means either mitigating them outright or at least planning a response should they occur) will work better than figuring it out on the fly when the problem occurs.

Understanding why “Should I?” is a more important question than “Can I?” is something I’ve touched on before. Snapchat is finding this out by way of a lawsuit over their filter that allows users to record their speed. Who would have thought someone might cause an accident using that?

Trial and error (experimenting) is one method of learning, but it’s not the only method and is frequently not the best one. Fear of failure can hold back learning, but a cavalier attitude toward risk can make experiments just as costly, if not more so. It’s the difference between testing a 9-volt battery by touching it to your tongue and using the same technique on a 240-volt circuit.
